The Video Egress Problem

Video is the most data-intensive workload in consumer cloud infrastructure. A 90-minute film at 1080p H.264 is approximately 4 to 6 GB. A 20-minute edtech lecture at 720p is approximately 500 MB to 1 GB. Every view of every piece of content generates that much data leaving your storage infrastructure and travelling to the viewer's device.

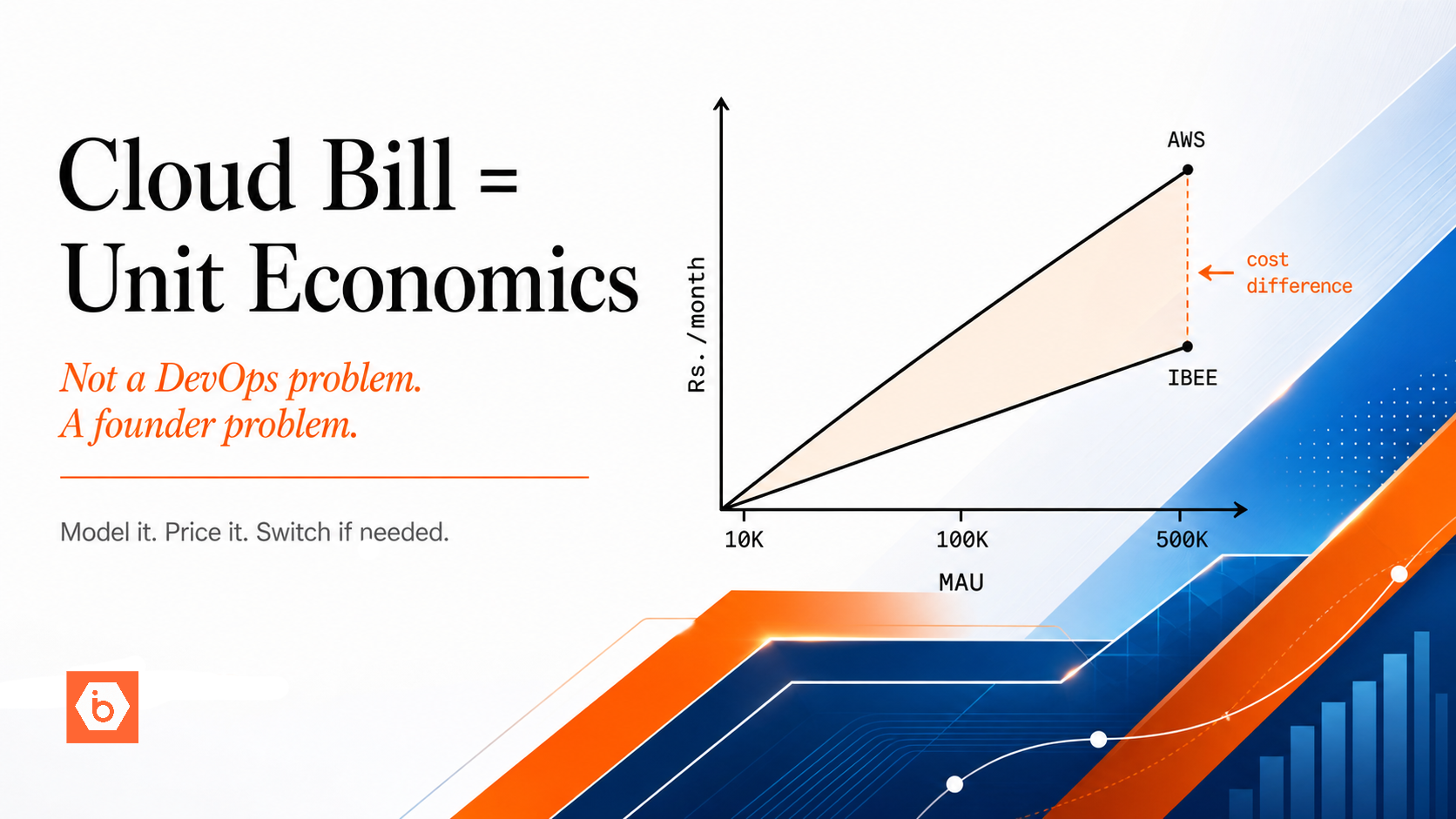

For an edtech platform with 500,000 monthly active learners, each watching an average of 4 hours of 720p content per month at an average adaptive bitrate of 1.5 Mbps, the total monthly delivery volume is approximately 1,228 TB. At AWS S3 Mumbai egress rates of Rs.10.37/GB ($0.1093/GB), serving that volume directly from storage costs approximately Rs.1,27,34,000 (~$134,170) per month in egress. At IBEE egress rates of Rs.2/GB ($0.021/GB), the same 1,228 TB costs approximately Rs.24,56,000 (~$25,877) per month. The Rs.1,02,78,000 (~$108,300) monthly difference is not a marginal optimisation. It is structural cost relief that changes the unit economics of the business. All USD equivalents in this article use a conversion rate of Rs.94.91 per dollar as of May 2026.

But egress cost alone does not tell the full story. The architecture of how video is stored and delivered determines how much egress actually hits the storage origin and what fraction is served from CDN cache. Getting the architecture right matters as much as choosing the right provider. We have seen platforms reduce their effective origin egress by more than 90 percent simply by putting a CDN in front of correctly structured object storage.

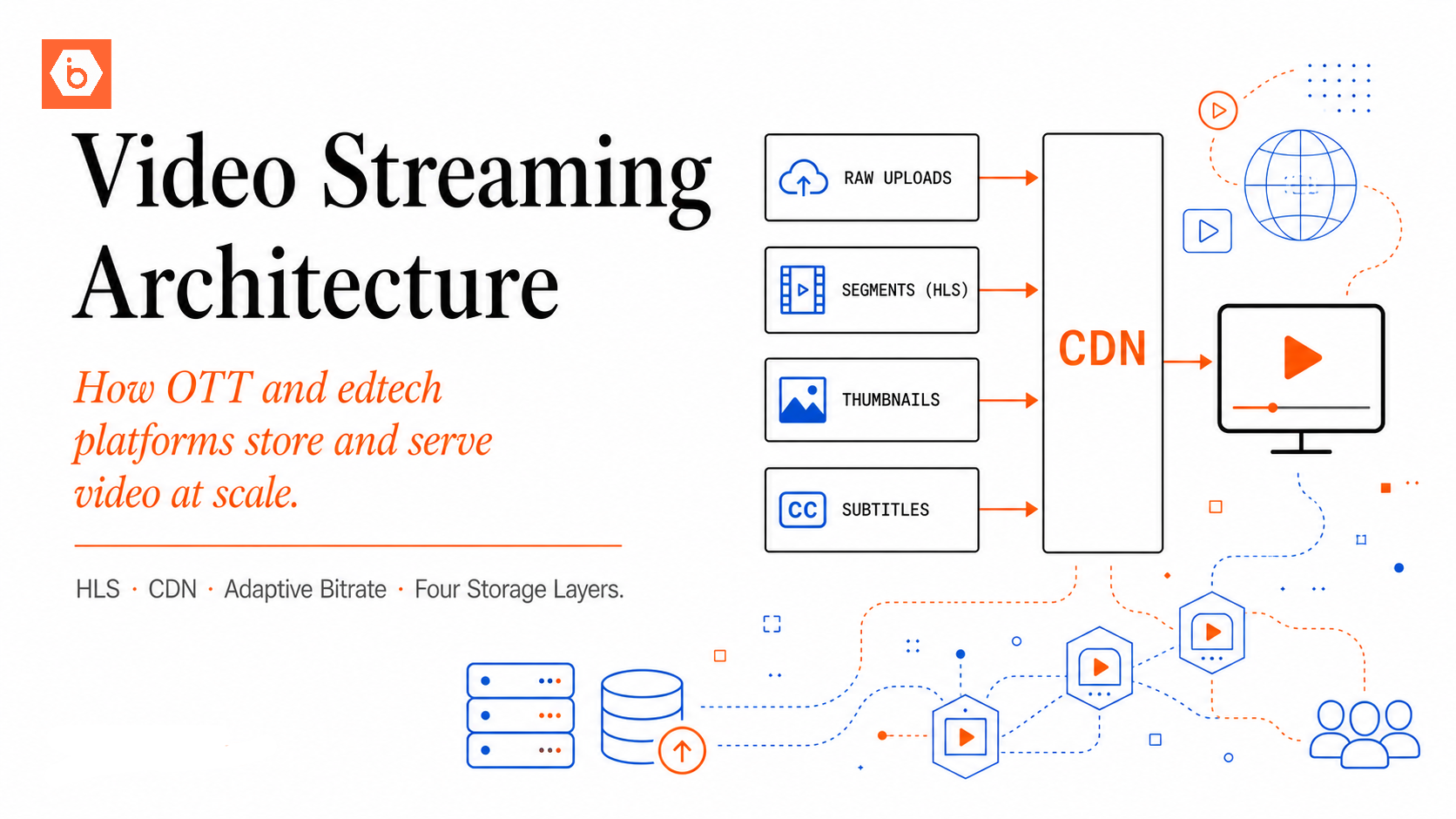

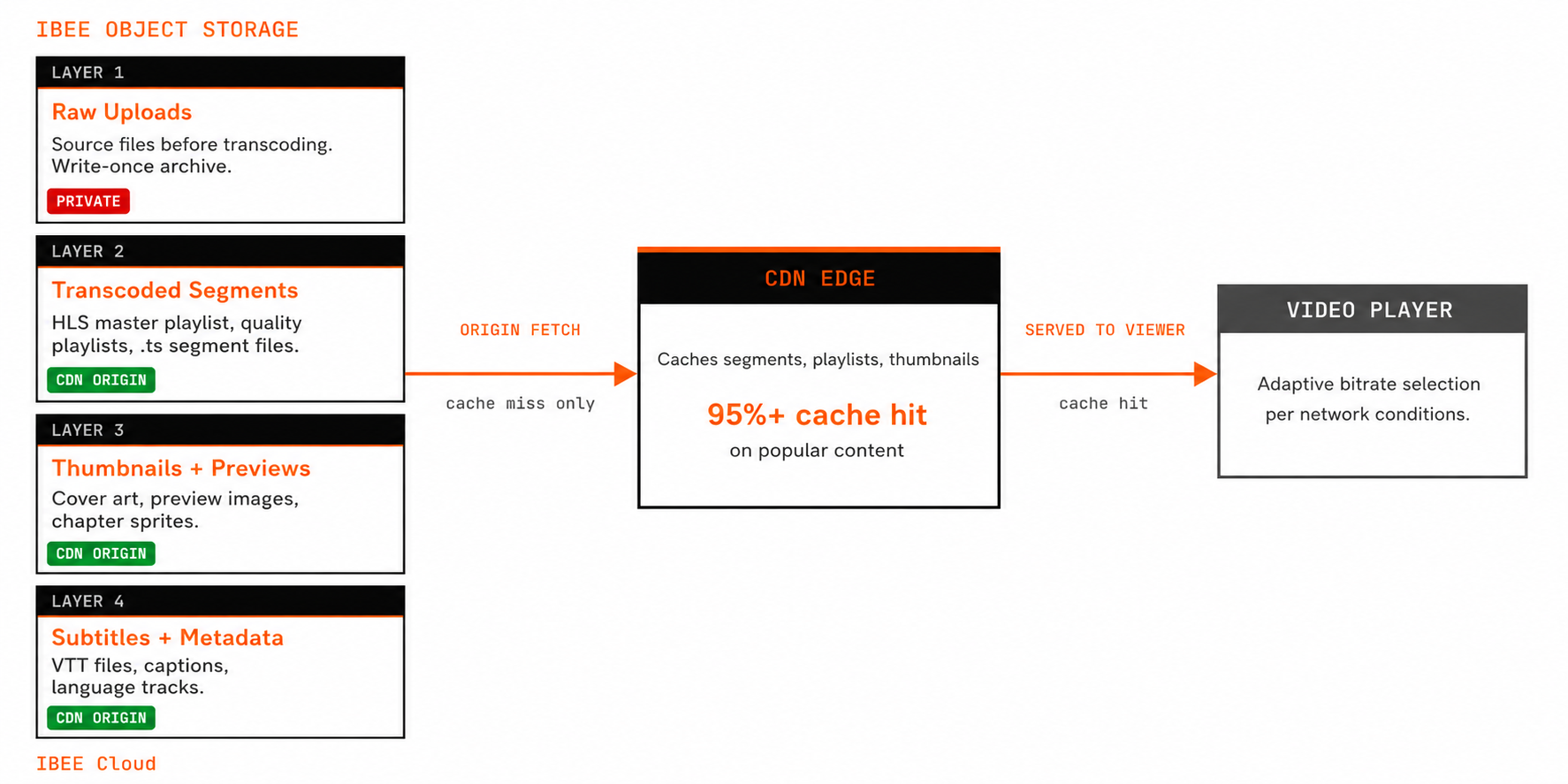

The diagram below shows the four-layer video storage architecture and the CDN delivery path.

Four storage layers, one delivery path.

The Correct Video Storage Architecture

The video storage stack has four layers, each with a distinct function.

Layer 1: Raw Upload Storage

When a creator or content team uploads a video, the raw file lands in a private bucket. This bucket is never directly accessible to viewers. Its purpose is to hold the source file during the transcoding pipeline and to preserve the original for reprocessing if encoding parameters change. Object keys encode the content identifier and original filename. Access is restricted to the transcoding service. A lifecycle policy can expire raw uploads 30 days after processed versions are confirmed, or retain them indefinitely if re-encoding from the originals is a business requirement.

Layer 2: Transcoded Segment Storage

After ingestion, the video is transcoded into multiple quality levels and segmented for adaptive bitrate streaming. The output is an HLS or DASH manifest alongside thousands of short segment files, typically 4 to 10 seconds each. This is the content that viewers actually consume, always through the CDN. Files are organised by content identifier: the master playlist, quality-level playlists for each resolution (720p, 480p, 360p), and the individual segment files for each quality level. The bucket serves as the CDN origin and is not directly accessible to viewers.
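The per-content layout described above can be sketched as a small key-building helper. This is only an illustration: the file names `master.m3u8`, `playlist.m3u8`, and `segment_NNNNN.ts` and the content identifier are assumptions, not a naming scheme prescribed by IBEE or by HLS.

```python
def segment_keys(content_id: str, renditions: list[str], segment_count: int) -> list[str]:
    """Return the object keys for one title: the master playlist, one
    playlist per rendition, and numbered segments under each rendition."""
    keys = [f"{content_id}/master.m3u8"]
    for rendition in renditions:
        keys.append(f"{content_id}/{rendition}/playlist.m3u8")
        for index in range(segment_count):
            # Zero-padded indices keep segments in order when keys are listed.
            keys.append(f"{content_id}/{rendition}/segment_{index:05d}.ts")
    return keys


# Hypothetical content identifier, with two segments per rendition for brevity.
keys = segment_keys("course-101-lecture-03", ["720p", "480p", "360p"], segment_count=2)
for key in keys:
    print(key)
```

Keeping everything for a title under one prefix means a whole title can be listed, invalidated, or deleted by prefix.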

Layer 3: Thumbnail and Preview Storage

This layer holds thumbnails, preview images, animated previews, and chapter thumbnail sprites: typically 10 to 50 images per piece of content, served directly to the UI layer. Because the same images are requested by many users, cache hit rates are high and CDN caching is very effective here.

Layer 4: Subtitle and Metadata Storage

This layer holds VTT subtitle files, closed caption files, multiple language tracks, and chapter markers, all served alongside the video manifests. Separating these into their own bucket allows independent access policies and lifecycle management.

HLS Segmentation and Adaptive Bitrate

HLS is the dominant video streaming protocol for Indian audiences. It is supported on iOS, Android, and most smart TV platforms, and it plays in most browsers either natively or through a JavaScript player. The video is divided into short segments, and the player automatically selects the quality level appropriate for the viewer's current network conditions.

A well-configured HLS setup for an Indian audience typically includes four quality levels. The highest tier at 1080p or 720p targets broadband and strong WiFi users. A 480p mid-tier serves mobile users on good 4G. A 360p lower tier serves Tier 2 and Tier 3 city users where 4G quality is variable. A 240p or audio-only tier serves users on 2G, 3G, or very constrained connections.

The master playlist file lists all available quality levels with their bandwidth requirements. The player reads this file, measures current download speed, and selects the appropriate quality. When conditions change, the player switches quality levels seamlessly by requesting the next segment at a different resolution.
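As a rough illustration of the master playlist and the player's choice, the sketch below renders a four-tier playlist and picks a variant for a measured throughput. The bitrates, resolutions, and URIs in `LADDER` are assumed values for illustration, and `pick_variant` is a deliberate simplification: real players also consider buffer level and throughput history.

```python
def master_playlist(variants: list[dict]) -> str:
    """Render an HLS master playlist listing each variant with its bandwidth."""
    lines = ["#EXTM3U"]
    for variant in variants:
        lines.append(
            f"#EXT-X-STREAM-INF:BANDWIDTH={variant['bandwidth']},"
            f"RESOLUTION={variant['resolution']}"
        )
        lines.append(variant["uri"])
    return "\n".join(lines) + "\n"


def pick_variant(variants: list[dict], measured_bps: int) -> dict:
    """Pick the highest-bandwidth variant that fits the measured throughput,
    falling back to the lowest variant when none fits."""
    affordable = [v for v in variants if v["bandwidth"] <= measured_bps]
    if affordable:
        return max(affordable, key=lambda v: v["bandwidth"])
    return min(variants, key=lambda v: v["bandwidth"])


# Assumed ladder: bandwidth is in bits per second.
LADDER = [
    {"bandwidth": 2_500_000, "resolution": "1280x720", "uri": "720p/playlist.m3u8"},
    {"bandwidth": 1_200_000, "resolution": "854x480", "uri": "480p/playlist.m3u8"},
    {"bandwidth": 700_000, "resolution": "640x360", "uri": "360p/playlist.m3u8"},
    {"bandwidth": 350_000, "resolution": "426x240", "uri": "240p/playlist.m3u8"},
]

print(master_playlist(LADDER))
print(pick_variant(LADDER, measured_bps=1_000_000)["uri"])
```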

The transcoding pipeline runs once when content is ingested. FFmpeg is the standard open-source tool for producing HLS output from a raw master file: it transcodes the source into multiple resolution variants and segments each into the short files that HLS players consume. The resulting playlists and segment files are uploaded to the processed segment bucket on IBEE, preserving the directory structure per content identifier. Full transcoding pipeline configuration examples for IBEE are available at ibee.ai/docs.
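A minimal sketch of one rendition's FFmpeg invocation is shown below, built as an argument list rather than executed. The paths, bitrates, and 6-second segment length are assumptions chosen for illustration. The flags themselves (`-hls_time`, `-hls_playlist_type`, `-hls_segment_filename`) are standard options of FFmpeg's HLS muxer, but a production pipeline would likely also tune keyframe intervals and encoder presets.

```python
def hls_ffmpeg_args(source: str, out_dir: str, height: int, video_kbps: int,
                    audio_kbps: int = 128, segment_seconds: int = 6) -> list[str]:
    """Build the ffmpeg argument list for one HLS rendition of a source file."""
    rendition_dir = f"{out_dir}/{height}p"
    return [
        "ffmpeg", "-i", source,
        # Scale to the target height; -2 keeps the aspect ratio with an even width.
        "-vf", f"scale=-2:{height}",
        "-c:v", "libx264", "-b:v", f"{video_kbps}k",
        "-c:a", "aac", "-b:a", f"{audio_kbps}k",
        "-f", "hls",
        "-hls_time", str(segment_seconds),
        # "vod" writes a complete playlist that lists every segment.
        "-hls_playlist_type", "vod",
        "-hls_segment_filename", f"{rendition_dir}/segment_%05d.ts",
        f"{rendition_dir}/playlist.m3u8",
    ]


# Hypothetical source file and output directory.
args = hls_ffmpeg_args("raw/course-101.mp4", "out/course-101", height=720, video_kbps=2500)
print(" ".join(args))
```

Once the rendition directory exists, the list can be passed to `subprocess.run(args, check=True)`, once per quality level.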

CDN Integration for Video Delivery

Object storage is the origin. A CDN is mandatory for video delivery at scale.

Without a CDN, every segment request from every viewer hits the origin bucket directly. For 10,000 concurrent viewers of a popular episode, every 6-second segment generates 10,000 GET requests to the origin.

With a CDN, the first viewer's request for a segment fetches it from the origin and caches it at the CDN edge. Every subsequent viewer's request for the same segment is served from cache without an origin request. For popular content, CDN cache hit rates above 95% are achievable, meaning the origin sees only one request per segment per CDN edge node rather than one per viewer.
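The effect can be illustrated with a toy model of a single edge node. This is a sketch under simplifying assumptions: one edge, no expiry or eviction, and a stand-in `fetch_from_origin` function in place of a real origin request.

```python
class EdgeCache:
    """Toy model of one CDN edge node: fetch on first request, then serve from cache."""

    def __init__(self, fetch_from_origin):
        self._fetch = fetch_from_origin
        self._store = {}
        self.hits = 0
        self.misses = 0

    def get(self, key: str) -> bytes:
        if key in self._store:
            self.hits += 1
        else:
            # Cache miss: this is the only path that generates origin egress.
            self.misses += 1
            self._store[key] = self._fetch(key)
        return self._store[key]


origin_calls = []

def fetch_from_origin(key: str) -> bytes:
    """Stand-in for a GET against the origin bucket."""
    origin_calls.append(key)
    return b"segment-bytes"


edge = EdgeCache(fetch_from_origin)
for _ in range(10_000):  # 10,000 viewers requesting the same segment
    edge.get("course-101/720p/segment_00001.ts")

hit_rate = edge.hits / (edge.hits + edge.misses)
print(len(origin_calls), f"{hit_rate:.4f}")
```

In this model the origin serves the segment once and the edge serves the remaining 9,999 requests. Real hit rates are lower because traffic is spread across many edge nodes and cached objects expire or are evicted.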

Configure the CDN origin to point at IBEE's endpoint for the processed segment bucket. Manifest cache durations depend on the stream type: 30 to 60 seconds for live streams, where the manifest must keep up with the current segment list, and 1 to 24 hours for on-demand content, where the segment list is fixed. Segment files should have long cache durations of 7 days or more because they are immutable once published.
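One way to express these rules is as a function that maps an object key to a `Cache-Control` value, applied either as object metadata at upload or as a CDN rule. The sketch below assumes `.m3u8` manifests and `.ts`, `.m4s`, or `.mp4` segments. The specific max-age values are picked from the ranges above, and the one-hour default for other files is an assumption.

```python
SEGMENT_SUFFIXES = (".ts", ".m4s", ".mp4")


def cache_control_for(key: str, live: bool = False) -> str:
    """Return a Cache-Control header value for an object key."""
    if key.endswith(".m3u8"):
        # Manifests: short for live streams, longer for on-demand content.
        max_age = 30 if live else 3600
        return f"public, max-age={max_age}"
    if key.endswith(SEGMENT_SUFFIXES):
        # Segments never change after publication, so cache them for 7 days.
        return f"public, max-age={7 * 24 * 3600}, immutable"
    # Assumed default for anything else, such as thumbnails or subtitles.
    return "public, max-age=3600"


print(cache_control_for("course-101/master.m3u8"))
print(cache_control_for("live-event/playlist.m3u8", live=True))
print(cache_control_for("course-101/720p/segment_00001.ts"))
```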

With CDN caching in place, the effective origin egress for popular content is a small fraction of total viewer delivery volume. IBEE's Rs.2/GB ($0.021/GB) origin egress rate applies to CDN cache misses, not to the total viewer traffic volume.

Token-Based Access Control for Premium Content

For paid content, video segments must not be publicly accessible. A viewer who obtains a manifest URL should not be able to share it to let non-subscribers watch.

The standard approach is time-limited signed URLs. The video player requests a signed manifest URL from your API. The API verifies the viewer's subscription status, generates a presigned URL for the manifest that expires in 4 to 8 hours, and returns it to the player. The player uses the signed URL to fetch the manifest, which in turn references signed URLs for each quality-level playlist and segment file. Because every presigned URL carries its own signature, the playlists must be generated or rewritten per session so that each child URL they reference is itself signed.

IBEE's full S3 API compatibility includes presigned URL generation. Any S3-compatible SDK generates presigned GET URLs valid for a specified duration, with no additional configuration required.
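To show the idea behind a time-limited signed URL, the sketch below uses a plain HMAC over the path and expiry time. This is not the S3 presigning algorithm (SigV4). In practice you would call your S3 SDK's presign function, for example `generate_presigned_url` in boto3. The host name, secret, and query parameter names here are hypothetical.

```python
import hashlib
import hmac
import time
from urllib.parse import parse_qs, urlencode, urlparse


def sign_url(base_url: str, path: str, secret: bytes, ttl_seconds: int, now=None) -> str:
    """Return a URL carrying an expiry timestamp and an HMAC over path and expiry."""
    expires = int(now if now is not None else time.time()) + ttl_seconds
    signature = hmac.new(secret, f"{path}:{expires}".encode(), hashlib.sha256).hexdigest()
    return f"{base_url}{path}?{urlencode({'expires': expires, 'sig': signature})}"


def verify_url(url: str, secret: bytes, now=None) -> bool:
    """Accept the URL only if it has not expired and the signature matches."""
    parsed = urlparse(url)
    query = parse_qs(parsed.query)
    try:
        expires = int(query["expires"][0])
        signature = query["sig"][0]
    except (KeyError, ValueError):
        return False
    current = int(now if now is not None else time.time())
    if current > expires:
        return False
    expected = hmac.new(secret, f"{parsed.path}:{expires}".encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking the signature through timing.
    return hmac.compare_digest(signature, expected)


SECRET = b"example-secret"  # hypothetical; load from a secret store in practice
ISSUED_AT = 1_000_000       # fixed timestamp so the example is repeatable
url = sign_url("https://cdn.example.com", "/course-101/master.m3u8",
               SECRET, ttl_seconds=4 * 3600, now=ISSUED_AT)
print(url)
```

A URL verified within the 4-hour window is accepted. The same URL after expiry, or with its path altered, is rejected.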

For live streaming with DRM requirements, the architecture extends to include a licence server for a DRM system such as Widevine, FairPlay, or PlayReady, which issues decryption keys only to authenticated sessions. The encrypted segments stored on IBEE are useless without the keys, so the content stays protected even if segment URLs are shared.

Egress Cost Modelling for Video Platforms

Model your monthly delivery volume before choosing a provider. The formula for total CDN egress in GB is: monthly active users × average watch time per user in hours × average bitrate in Mbps × 3,600,000,000 ÷ 8 ÷ 1,073,741,824. A simpler equivalent: multiply users × hours × Mbps by 0.419 to get GB.

For 200,000 MAU watching 3 hours per month at an average adaptive bitrate of 1.5 Mbps: 200,000 × 3 × 1.5 × 0.419 = approximately 377,100 GB, or about 368 TB of total CDN-served traffic per month. With a 95 percent CDN cache hit rate, the storage origin serves approximately 18,855 GB, or about 18.4 TB, of origin-fetch traffic per month. At IBEE's Rs.2/GB ($0.021/GB), origin egress costs approximately Rs.37,700 (~$397) per month. At AWS S3 Mumbai's Rs.10.37/GB ($0.1093/GB), the same origin egress costs approximately Rs.1,95,500 (~$2,060) per month.
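The same model can be written as a short script, which makes it easy to vary the inputs. The function and variable names are illustrative. Because the script uses the unrounded conversion factor (about 0.4191 rather than 0.419), its results come out slightly higher than the rounded figures above.

```python
# GB delivered per user-hour at 1 Mbps: 3,600,000,000 bits / 8 / 1,073,741,824.
GB_PER_USER_HOUR_MBPS = 3_600_000_000 / 8 / 1_073_741_824


def monthly_delivery_gb(users: int, hours_per_user: float, avg_mbps: float) -> float:
    """Total viewer delivery volume per month, in GB."""
    return users * hours_per_user * avg_mbps * GB_PER_USER_HOUR_MBPS


def origin_egress_cost(delivery_gb: float, cache_hit_rate: float, rate_per_gb: float) -> float:
    """Cost of the traffic that misses the CDN cache and is fetched from origin."""
    return delivery_gb * (1 - cache_hit_rate) * rate_per_gb


total_gb = monthly_delivery_gb(users=200_000, hours_per_user=3, avg_mbps=1.5)
ibee_cost = origin_egress_cost(total_gb, cache_hit_rate=0.95, rate_per_gb=2.0)    # Rs.
aws_cost = origin_egress_cost(total_gb, cache_hit_rate=0.95, rate_per_gb=10.37)   # Rs.

print(f"total delivery: {total_gb:,.0f} GB")
print(f"IBEE origin egress: Rs.{ibee_cost:,.0f}")
print(f"AWS S3 Mumbai origin egress: Rs.{aws_cost:,.0f}")
```

Changing `cache_hit_rate` shows how sensitive origin cost is to CDN configuration: dropping from 0.95 to 0.90 doubles the origin egress bill at either provider.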

The dominant cost variable for video platforms is CDN egress from the CDN provider to viewers, not storage origin egress from S3 or IBEE to the CDN. CDN egress is charged by the CDN provider, and its volume is typically 10 to 20 times the storage origin egress at cache hit rates of 90 to 95 percent. The storage provider's egress rate determines origin egress cost, which applies to the residual traffic that misses the CDN cache. Choosing IBEE over AWS S3 Mumbai reduces origin egress cost by a factor of about 5.2 (Rs.10.37 versus Rs.2 per GB) on that residual volume, and that saving scales directly with user growth and catalogue size.