Why Moderation Architecture Starts at the Storage Layer

Content moderation is often thought of as a classification problem: does this image contain prohibited content? But before classification can happen, the uploaded content must be stored somewhere that prevents it from being served to other users before review, preserves it for legal hold even after deletion, supports the complete appeal and reinstatement workflow, and provides an audit trail for platform compliance obligations.

The storage architecture is the foundation of the moderation pipeline. Getting it wrong means either content is exposed before moderation, which creates legal and reputational risk, or approved content is lost during status transitions, which creates product failures. This guide covers a three-bucket design that avoids both failures.

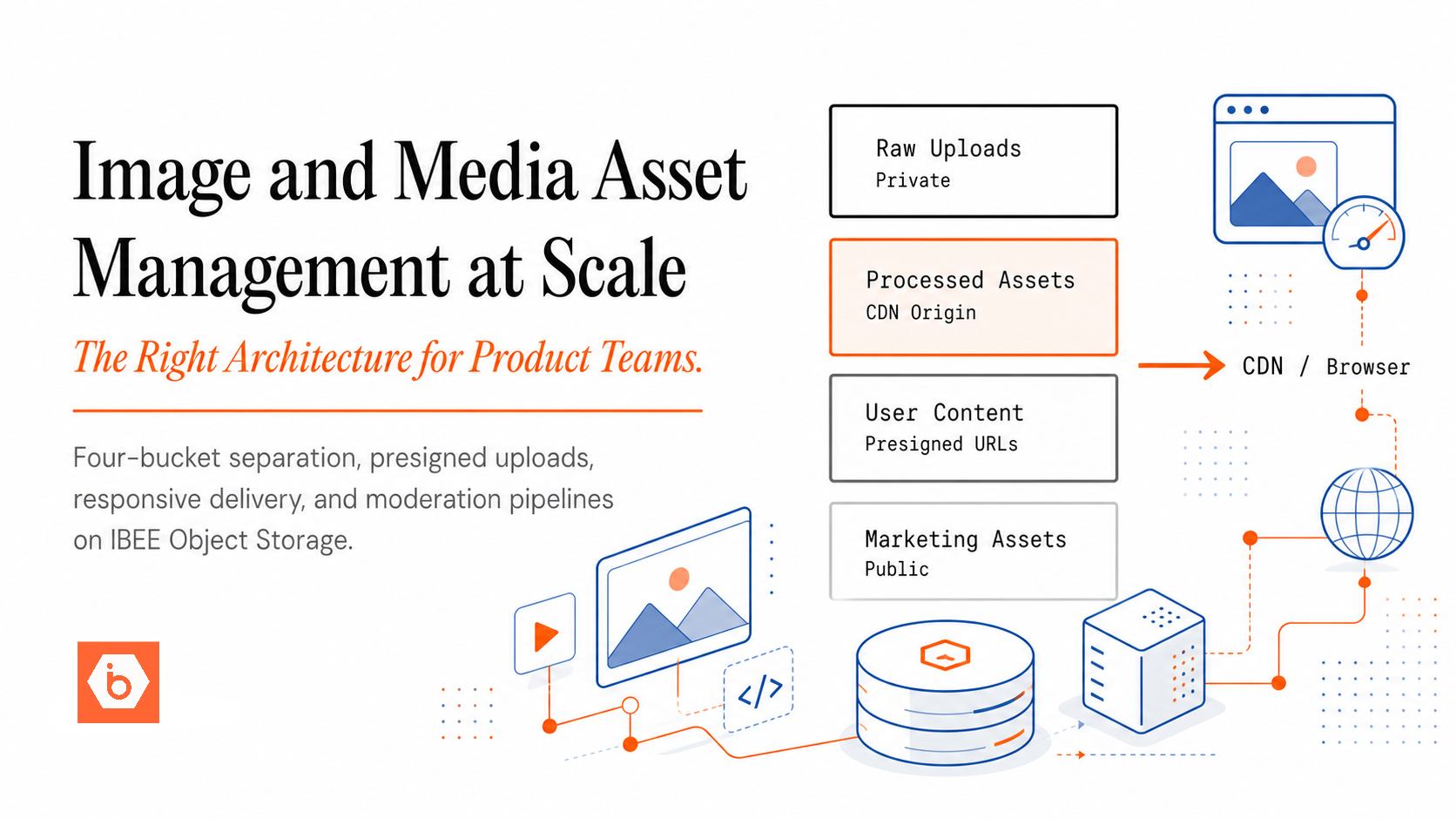

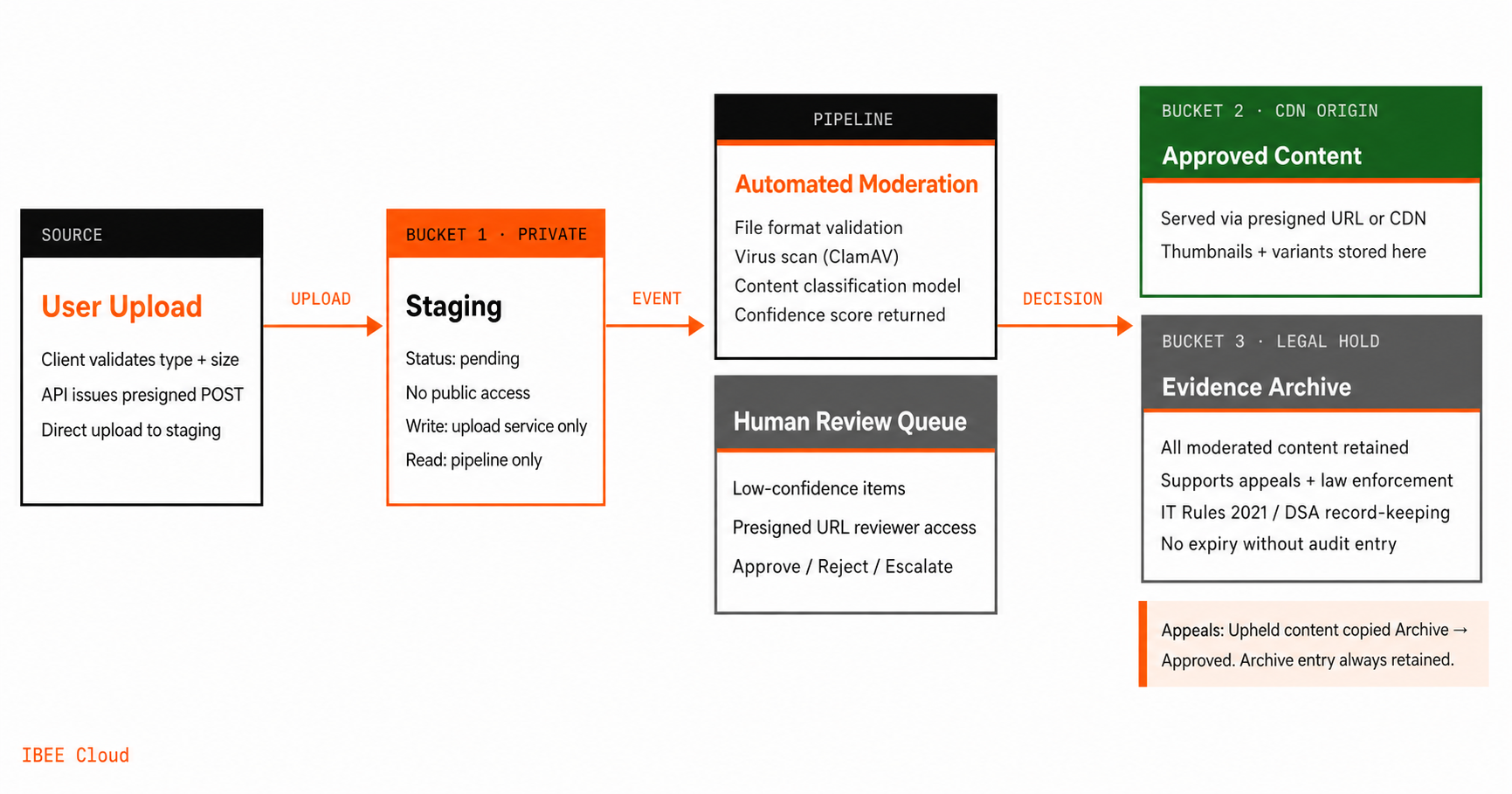

The Three-Bucket Moderation Architecture

The diagram below shows how the three buckets relate to each other and to the moderation pipeline that moves content between them.

Every upload lands in staging first. Nothing reaches users until moderation makes a decision. Nothing is deleted without an archive record.

Bucket 1: Upload Staging (Private)

Every user upload lands here first. This bucket is entirely private, meaning no content in it is ever served to any other user. The upload service has write access. The moderation pipeline has read access. No other service has any access.

Content remains in the staging bucket until the moderation pipeline makes a final decision. For automated moderation, this may take seconds. For content that requires human review, it may take hours or days.

Object tagging tracks moderation status within this bucket. On upload, each object receives a pending moderation status tag. The moderation pipeline then updates that tag to approved, rejected, or flagged for human review depending on the outcome.
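The tag lifecycle described above can be sketched as a small state machine. This is a minimal illustration, not a prescribed schema: the tag key `moderation-status`, the value names, and the rule that approved and rejected are terminal within staging are all assumptions made for the example.

```python
from enum import Enum


class ModerationStatus(str, Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"
    HUMAN_REVIEW = "human_review"


# Allowed moves between statuses (an assumption of this sketch).
# Appeals are handled against the evidence archive, so they are not modeled here.
ALLOWED_TRANSITIONS = {
    ModerationStatus.PENDING: {
        ModerationStatus.APPROVED,
        ModerationStatus.REJECTED,
        ModerationStatus.HUMAN_REVIEW,
    },
    ModerationStatus.HUMAN_REVIEW: {
        ModerationStatus.APPROVED,
        ModerationStatus.REJECTED,
    },
    ModerationStatus.APPROVED: set(),
    ModerationStatus.REJECTED: set(),
}


def next_tags(tags: dict, new_status: ModerationStatus) -> dict:
    """Return a new tag set with the status updated, or raise on an invalid move."""
    current = ModerationStatus(tags.get("moderation-status", "pending"))
    if new_status not in ALLOWED_TRANSITIONS[current]:
        raise ValueError(f"cannot move from {current.value} to {new_status.value}")
    # Copy rather than mutate, so the caller decides when to write tags back.
    return {**tags, "moderation-status": new_status.value}
```

Rejecting invalid transitions in one place makes it harder for a retried or out-of-order event to flip a rejected object back to pending.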

Bucket 2: Approved Content (Private Origin, CDN-Served)

Content that has passed moderation, either automatically or after human review, is copied to this bucket. The approved bucket is the CDN origin: presigned URLs or public CDN paths serve content to users from here.

Only content in the approved bucket is ever served to users. The copy from staging to approved is an atomic operation: the content is either in the approved bucket and servable, or it is not. There is no intermediate state where partially approved content could be served. The exposure this avoids appears most often when teams enforce moderation status with checks on the write path but not the read path, leaving rejected content accessible to anyone who had already received a URL.
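One way to keep the read path honest is to have a single function decide what may be served, and to have it only ever return locations in the approved bucket. The sketch below assumes a database record shaped as a dict with `status` and `approved_key` fields; the bucket name and field names are hypothetical.

```python
from typing import Optional, Tuple

APPROVED_BUCKET = "ugc-approved"  # hypothetical bucket name


def serving_location(record: dict) -> Optional[Tuple[str, str]]:
    """Return (bucket, key) for a servable object, or None if it must not be served.

    The function never returns a staging or archive location, so a caller
    cannot accidentally build a URL for unreviewed or rejected content.
    """
    if record.get("status") != "approved":
        return None
    key = record.get("approved_key")
    if not key:
        # Marked approved but the copy was never confirmed: do not serve.
        return None
    return (APPROVED_BUCKET, key)
```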

Bucket 3: Evidence Archive (Private, Legal Hold)

All moderated content, whether the outcome was approval or rejection, is preserved in the evidence archive. This includes rejected content that was never served to any user. The evidence archive supports legal holds for content involved in law enforcement requests, appeal processing for content that a user disputes, and platform compliance audits demonstrating that prohibited content was handled correctly.

The evidence archive has no lifecycle expiry by default. Deletion requires an explicit action with a corresponding audit log entry. For platforms subject to IT Rules 2021 (India's intermediary guidelines), the EU Digital Services Act, or equivalent national frameworks requiring record-keeping of moderation decisions, the evidence archive is the technical implementation of that obligation.

The Upload and Moderation Flow

Step 1: Client-Side Validation Before Upload

Before accepting an upload, validate file type and size on the client. Reject obviously invalid uploads, such as a non-image MIME type submitted to a photo upload field or a file exceeding the permitted size, with an error and without accepting the file at all. This reduces processing load and filters out common abuse patterns before any bytes are transferred. Because client-side checks can be bypassed, the moderation pipeline validates the file format again in Step 3.
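The check itself is small. It is written here in Python for consistency with the other sketches in this guide; a browser or mobile client would express the same logic in its own language. The allow-list and the 10 MiB limit are assumed values, not platform requirements.

```python
from typing import Optional

ALLOWED_IMAGE_TYPES = {"image/jpeg", "image/png", "image/gif"}  # assumed allow-list
MAX_UPLOAD_BYTES = 10 * 1024 * 1024  # assumed limit: 10 MiB


def precheck_upload(content_type: str, size_bytes: int) -> Optional[str]:
    """Return an error message for an obviously invalid upload, or None if it may proceed."""
    if content_type not in ALLOWED_IMAGE_TYPES:
        return f"unsupported file type: {content_type}"
    if size_bytes <= 0:
        return "file is empty"
    if size_bytes > MAX_UPLOAD_BYTES:
        return f"file exceeds {MAX_UPLOAD_BYTES} bytes"
    return None
```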

Step 2: Presigned POST to Staging Bucket

The API generates a presigned POST URL for the staging bucket and returns it to the client. The client uploads directly to the staging bucket using that URL. The API records the upload in the database with a pending moderation status. Full presigned POST configuration for IBEE is available at ibee.ai/docs.
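As a rough sketch of this step, the function below builds a presigned POST with a size limit and a fixed content type. It assumes `s3_client` is a boto3 S3 client configured for an S3-compatible endpoint; `generate_presigned_post` is boto3's method, while the bucket name, key layout, size limit, and expiry are illustrative assumptions. Provider-specific settings should be taken from the provider's own documentation.

```python
import uuid

STAGING_BUCKET = "ugc-staging"  # hypothetical bucket name
MAX_UPLOAD_BYTES = 10 * 1024 * 1024  # assumed limit: 10 MiB


def build_staging_post(s3_client, user_id: str, content_type: str,
                       expires_in: int = 300) -> dict:
    """Create a presigned POST that only accepts one object of a bounded size."""
    # A server-generated key prevents clients from overwriting each other's uploads.
    key = f"uploads/{user_id}/{uuid.uuid4().hex}"
    fields = {"Content-Type": content_type}
    conditions = [
        {"Content-Type": content_type},  # the form must send this exact type
        ["content-length-range", 1, MAX_UPLOAD_BYTES],  # enforced by the storage service
    ]
    post = s3_client.generate_presigned_post(
        Bucket=STAGING_BUCKET,
        Key=key,
        Fields=fields,
        Conditions=conditions,
        ExpiresIn=expires_in,
    )
    # The caller would also insert a database row for `key` with pending status.
    return {"key": key, "url": post["url"], "fields": post["fields"]}
```

The policy conditions matter because the upload bypasses the API: the size and type limits are then enforced by the storage service rather than trusted to the client.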

Step 3: Event-Driven Moderation Trigger

The staging bucket emits an object creation event when the upload completes. A moderation worker consumes the event and begins the automated pipeline. The pipeline first validates the file format, confirming the file is genuinely what its MIME type claims and not a disguised executable. It then passes the file through a virus scanner such as ClamAV. Finally, it runs the image or video through an automated content classification model, either self-hosted or a third-party classification API, and returns a decision with a confidence score.
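The format validation stage can be illustrated with a magic-byte check: the worker reads the first bytes of the object and compares them with the signature expected for the claimed MIME type. This is a minimal sketch covering three image formats only; a production pipeline would likely use a fuller detection library and decode the file as well.

```python
# Leading byte signatures for a few common image formats.
SIGNATURES = {
    "image/jpeg": (b"\xff\xd8\xff",),
    "image/png": (b"\x89PNG\r\n\x1a\n",),
    "image/gif": (b"GIF87a", b"GIF89a"),
}


def matches_claimed_type(head: bytes, claimed_mime: str) -> bool:
    """Return True only if the leading bytes match the claimed MIME type.

    Unknown MIME types are rejected rather than passed through.
    """
    prefixes = SIGNATURES.get(claimed_mime)
    if prefixes is None:
        return False
    return head.startswith(prefixes)
```

A Windows executable renamed to `photo.png`, for example, begins with the bytes `MZ` and fails this check before it reaches the virus scanner or the classifier.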

Step 4: Automated Decision

If the automated pipeline returns a high-confidence clean result, the content is copied to the approved bucket, the database record is updated to approved status, and derivative assets such as thumbnails and resized variants are generated from the staging copy and written to the approved bucket. A copy is also written to the evidence archive, which preserves all moderated content regardless of outcome.

If the automated pipeline returns a high-confidence prohibited result, the database record is updated to rejected status, the file is copied to the evidence archive, and the user is notified with the appropriate rejection reason.

If the automated pipeline returns a low-confidence result or the content falls into a category requiring human review, the database record is updated to a human review status and the item is added to the human review queue.
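The three outcomes above reduce to a small routing function. The thresholds, label names, and the list of categories that always go to human review are assumptions chosen for illustration; real values depend on the classifier and on platform policy.

```python
from dataclasses import dataclass

CLEAN_THRESHOLD = 0.95        # assumed: minimum confidence to auto-approve
PROHIBITED_THRESHOLD = 0.95   # assumed: minimum confidence to auto-reject
ALWAYS_REVIEW = {"self_harm"}  # hypothetical categories that skip automation


@dataclass(frozen=True)
class Classification:
    label: str          # "clean" or "prohibited"
    confidence: float   # 0.0 to 1.0
    category: str = ""  # optional policy category reported by the classifier


def route(result: Classification) -> str:
    """Map a classifier result to 'approve', 'reject', or 'human_review'."""
    if result.category in ALWAYS_REVIEW:
        return "human_review"
    if result.label == "clean" and result.confidence >= CLEAN_THRESHOLD:
        return "approve"
    if result.label == "prohibited" and result.confidence >= PROHIBITED_THRESHOLD:
        return "reject"
    # Low confidence or an unrecognized label: a person decides.
    return "human_review"
```

Defaulting to human review means an unexpected classifier output can never approve or reject content on its own.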

Step 5: Human Review Queue

The human review queue is a database-backed list of items awaiting reviewer attention. Reviewers access a review interface that fetches short-lived presigned URLs for items in the staging bucket, so reviewers can view content without it ever being publicly accessible. The interface also displays the automated classification results and confidence scores alongside the content, and provides approve, reject, and escalate actions. Human decisions trigger the same copy-to-approved or copy-to-archive flow as automated decisions.
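A sketch of how the review interface might assemble one queue item follows. It assumes `s3_client` is a boto3 S3 client (`generate_presigned_url` is boto3's method) and that each queue row is a dict carrying the staging bucket, object key, and classifier output; the row shape and the five-minute expiry are assumptions.

```python
REVIEW_URL_TTL_SECONDS = 300  # assumed: links expire after five minutes


def build_review_item(s3_client, queue_row: dict) -> dict:
    """Return what a reviewer needs to see for one item in the queue."""
    # The URL grants temporary read access to a single staging object;
    # the bucket itself stays private.
    view_url = s3_client.generate_presigned_url(
        "get_object",
        Params={"Bucket": queue_row["bucket"], "Key": queue_row["key"]},
        ExpiresIn=REVIEW_URL_TTL_SECONDS,
    )
    return {
        "key": queue_row["key"],
        "view_url": view_url,
        "label": queue_row["label"],
        "confidence": queue_row["confidence"],
        "actions": ["approve", "reject", "escalate"],
    }
```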

Step 6: Appeals Workflow

When a user appeals a rejection, the appeal record is linked to the evidence archive entry for that content. The appeal reviewer retrieves the original file from the evidence archive rather than from staging, which may have been cleaned up by that point, and reviews it alongside any additional context the user provided. If the appeal is upheld, the content is copied from the archive to the approved bucket and made accessible. The archive entry is retained regardless of the appeal outcome.
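The key property of the appeal flow is that reinstatement is a copy, never a move. The sketch below models the archive and approved buckets as in-memory dicts purely to show that invariant; the audit log entry shape is an assumption, and a real implementation would use the storage API's copy operation.

```python
def resolve_appeal(archive: dict, approved: dict, audit_log: list,
                   key: str, upheld: bool) -> None:
    """Apply an appeal decision while leaving the archive entry in place."""
    if key not in archive:
        raise KeyError(f"no archive entry for {key}")
    if upheld:
        # Copy from the archive, not staging, which may already be cleaned up.
        approved[key] = archive[key]
    audit_log.append({
        "key": key,
        "action": "appeal_upheld" if upheld else "appeal_denied",
    })
```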

Legal Hold and Evidence Preservation

Platforms that receive law enforcement or court requests for user-generated content must be able to produce the requested content reliably. The evidence archive is designed for this.

When a legal hold is placed on a specific user's content, all objects in the evidence archive associated with that user receive a legal hold flag. Lifecycle policies must be configured to skip expiry for objects carrying this flag. The hold remains in place until explicitly released with a corresponding audit entry.
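The hold and the lifecycle check can be sketched together. The example assumes an index of archive entries, each a dict with a `user_id` and a `tags` dict, and a tag named `legal-hold`; those names, and the audit entry fields, are hypothetical. How tags and lifecycle rules are actually configured depends on the storage provider.

```python
from datetime import datetime, timezone
from typing import Optional


def place_legal_hold(archive_index: list, user_id: str,
                     audit_log: list, reference: str) -> int:
    """Flag every archive entry for a user and record the action. Returns the count."""
    held = 0
    for entry in archive_index:
        if entry["user_id"] == user_id:
            entry["tags"]["legal-hold"] = "true"
            held += 1
    audit_log.append({
        "action": "legal_hold_placed",
        "user_id": user_id,
        "reference": reference,
        "objects": held,
        "at": datetime.now(timezone.utc).isoformat(),
    })
    return held


def may_expire(tags: dict, age_days: int,
               retention_days: Optional[int] = None) -> bool:
    """Decide whether a lifecycle job is allowed to expire an archive object."""
    if tags.get("legal-hold") == "true":
        return False  # held objects never expire until the hold is released
    if retention_days is None:
        return False  # no expiry by default
    return age_days > retention_days
```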

For content involved in reported incidents such as harassment, threats, or child sexual abuse material, the evidence is typically preserved for the duration of any investigation plus a defined statutory retention period. This retention is managed through the same object tagging and lifecycle policy mechanism. Under IT Rules 2021, significant social media intermediaries in India are required to retain certain categories of content for defined periods even after removal. The EU Digital Services Act imposes equivalent record-keeping requirements on platforms designated as Very Large Online Platforms. The evidence archive with legal hold capability is the technical implementation of both.

IBEE for UGC Moderation Infrastructure

The moderation architecture requires private bucket access for staging and evidence, CDN delivery for approved content, and India-sovereign storage for evidence records. IBEE's S3-compatible API, presigned URL support, and object tagging capability support all three.

For Indian platforms with obligations under IT Rules 2021 or sector-specific regulations, India-sovereign storage for the evidence archive means legal hold requests and law enforcement responses involve Indian entities under Indian legal process. A platform storing its evidence archive on a US-incorporated cloud provider creates a cross-border data request problem for any law enforcement response that would otherwise run entirely through Indian institutions. IBEE's Indian entity structure, 180-day audit logging within Indian jurisdiction, and AES-256 encryption at rest as a default close that gap at the infrastructure level.