How Live Streaming Uses Object Storage

Live streaming and VOD use object storage differently. In VOD, content is transcoded once and stored permanently. The storage layer is read-heavy: many concurrent reads of stable files.

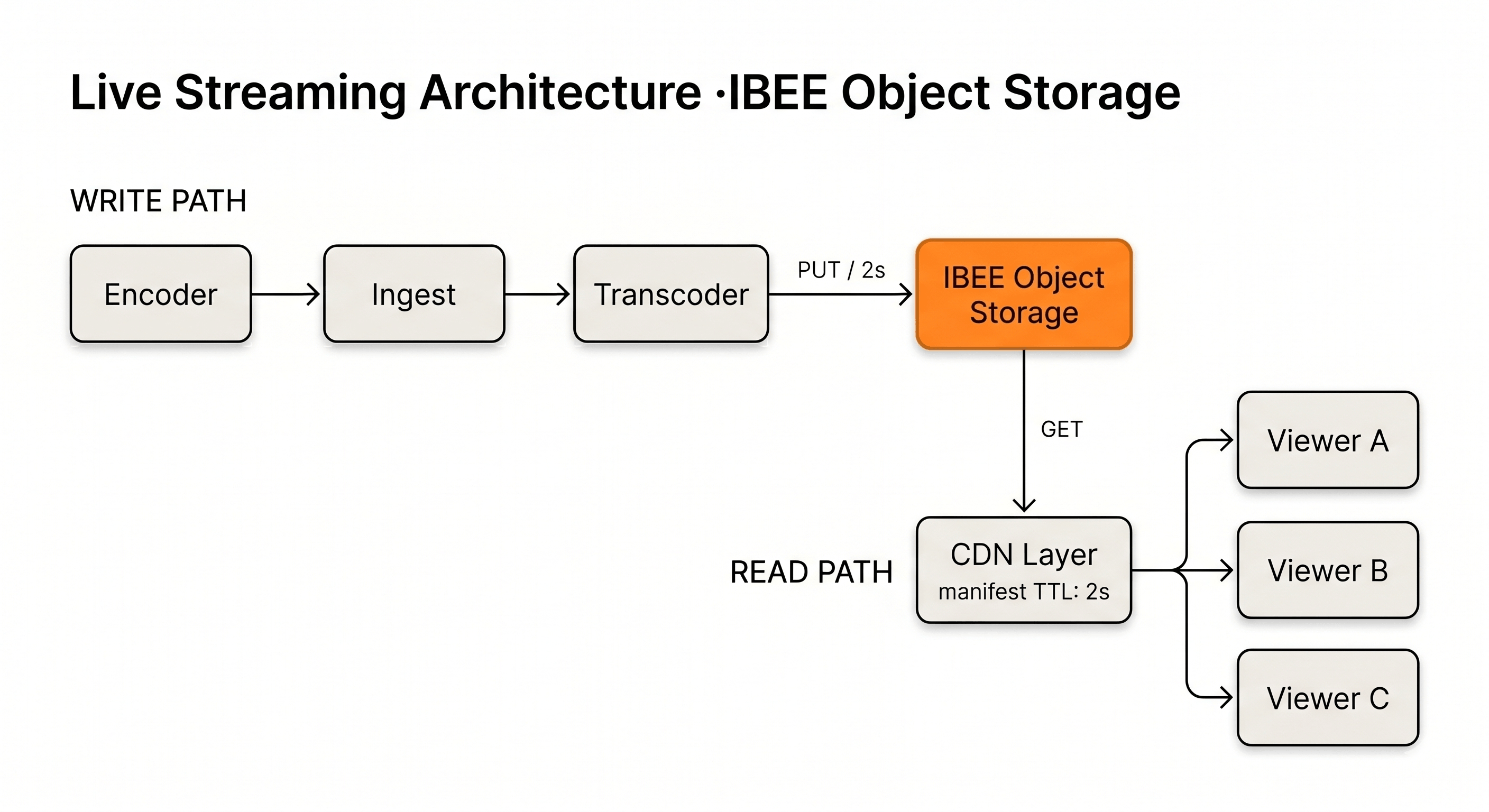

In live streaming, the encoder writes new HLS segments to object storage every 2 to 6 seconds throughout the broadcast. The manifest file is rewritten on every segment. It must always reflect the last few segments available for viewing. Viewers' players poll the manifest every few seconds to discover new segments and request them.

Object storage in a live stream is a high-write, low-latency, short-retention store. Segments are written continuously, served immediately through the CDN, and can be expired after 30 to 60 minutes for pure live streams, or retained for VOD playback in live-to-VOD workflows.

IBEE's S3-compatible API handles this write pattern well. Segment files are small, typically 500 KB to 3 MB each, and uploaded at high frequency. The per-request API cost at Rs.420/million Class A requests is negligible even at thousands of segments per hour. We have seen teams underestimate write frequency and overprovision transcoding before realising the storage costs are the smaller problem entirely.
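To put the request cost in perspective, here is a back-of-envelope calculation. It assumes 4-second segments, four quality levels, and one manifest PUT per segment per quality level, with every PUT billed as a Class A request. These figures are illustrative assumptions, not measured values.

```python
# Back-of-envelope Class A (write) request cost for one live stream.
SEGMENT_SECONDS = 4          # assumed; the article gives a 2 to 6 second range
RENDITIONS = 4               # assumed ladder: 1080p, 720p, 480p, 360p
RATE_RS_PER_MILLION = 420    # Class A rate quoted above


def hourly_put_requests(segment_seconds: int, renditions: int) -> int:
    """One segment PUT plus one manifest PUT, per rendition, per segment."""
    segments_per_hour = 3600 // segment_seconds
    return segments_per_hour * renditions * 2


def hourly_request_cost_rs(puts: int, rate_per_million: float) -> float:
    """Cost in rupees for the given number of Class A requests."""
    return puts * rate_per_million / 1_000_000


puts = hourly_put_requests(SEGMENT_SECONDS, RENDITIONS)
cost = hourly_request_cost_rs(puts, RATE_RS_PER_MILLION)
print(f"{puts} PUTs/hour -> Rs.{cost:.2f}/hour")  # 7200 PUTs/hour -> Rs.3.02/hour
```

Under these assumptions a single stream costs roughly Rs.3 per hour in write requests.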

The Live Streaming Stack

Ingest Layer: RTMP/SRT Receiver

The broadcaster sends a video stream to an ingest point using the RTMP or SRT protocol. The ingest server receives this stream and passes it to the transcoding layer. The source could be a software encoder such as OBS, a hardware encoder, or a mobile streaming app.

NGINX with the RTMP module is a common open-source ingest server. Commercial live streaming platforms such as Wowza and Ant Media Server provide managed ingest with built-in transcoding. The ingest server should run on a compute instance in India, close to the broadcaster, to minimise upload latency.

Transcoding Layer: Live Encoder

The transcoding layer converts the incoming stream to HLS format in real time. It segments the stream into 2 to 6 second chunks, encodes at multiple quality levels including 1080p, 720p, 480p, and 360p, and writes segments and manifests to object storage.
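The quality levels are advertised to players through an HLS master manifest. The sketch below builds one for the four levels named above. The bitrates, the `Rendition` type, and the `<name>/playlist.m3u8` paths are hypothetical choices for illustration; a real ladder should be tuned to the content.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rendition:
    name: str
    width: int
    height: int
    bandwidth_bps: int  # peak bitrate advertised to the player (assumed value)


# Assumed ladder; only the resolutions come from the article.
LADDER = [
    Rendition("1080p", 1920, 1080, 5_000_000),
    Rendition("720p", 1280, 720, 2_800_000),
    Rendition("480p", 854, 480, 1_400_000),
    Rendition("360p", 640, 360, 800_000),
]


def build_master_playlist(ladder: list[Rendition]) -> str:
    """Return an HLS master playlist with one variant entry per rendition."""
    lines = ["#EXTM3U", "#EXT-X-VERSION:3"]
    for r in ladder:
        lines.append(
            f"#EXT-X-STREAM-INF:BANDWIDTH={r.bandwidth_bps},"
            f"RESOLUTION={r.width}x{r.height}"
        )
        lines.append(f"{r.name}/playlist.m3u8")  # hypothetical relative path
    return "\n".join(lines) + "\n"


master_text = build_master_playlist(LADDER)
```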

FFmpeg can handle live transcoding for low-scale streams. For high-scale or multi-stream platforms, dedicated transcoding services backed by cloud or on-premise GPUs are required.

The transcoding service writes to IBEE over the S3 API. Each segment upload is a PUT operation. Each manifest update is a PUT that overwrites the previous manifest file with the updated segment list.
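A minimal sketch of that write sequence follows. It assumes `s3` is an S3 client exposing `put_object` with the boto3 keyword arguments; the function name and the choice to upload the segment before the manifest are illustrative, not a description of any particular transcoder.

```python
def publish_segment(s3, bucket: str, segment_key: str, segment_bytes: bytes,
                    manifest_key: str, manifest_text: str) -> None:
    """Upload a new segment, then overwrite the manifest that references it.

    Writing the segment first means a player never sees a manifest entry
    for a segment that does not exist yet.
    """
    s3.put_object(
        Bucket=bucket,
        Key=segment_key,
        Body=segment_bytes,
        ContentType="video/mp2t",  # MPEG-TS segments assumed
    )
    s3.put_object(
        Bucket=bucket,
        Key=manifest_key,
        Body=manifest_text.encode("utf-8"),
        ContentType="application/vnd.apple.mpegurl",
    )


# Usage (assumes boto3 is installed; the endpoint URL is deployment-specific):
#   import boto3
#   s3 = boto3.client("s3", endpoint_url=YOUR_ENDPOINT_URL)
#   publish_segment(s3, "live-bucket", "live/ch1/720p/seg_000001.ts", data,
#                   "live/ch1/720p/playlist.m3u8", playlist_text)
```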

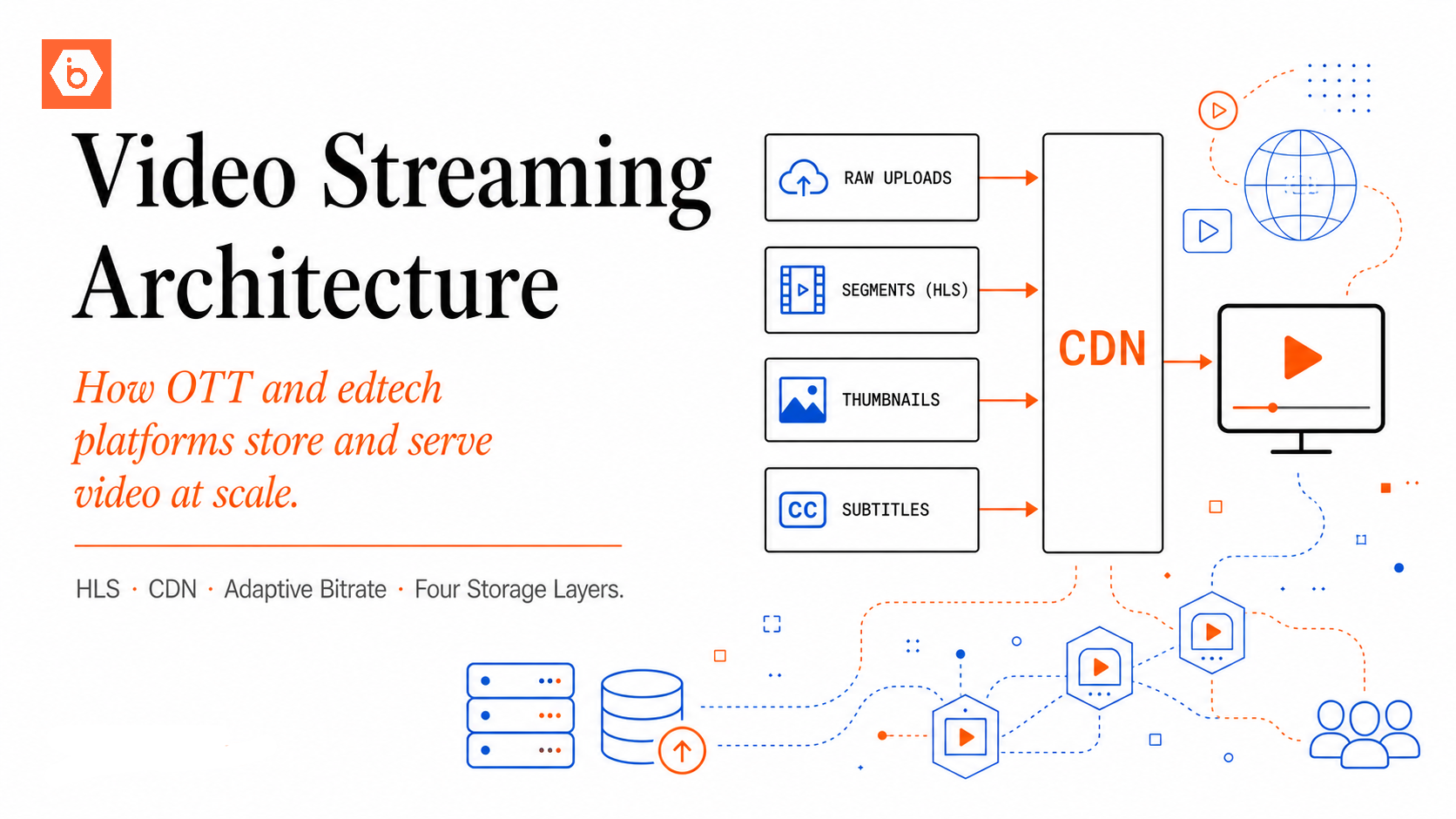

Object Storage Layer: IBEE

The live segment store holds segment files and manifests. Two access patterns run simultaneously: the transcoder writes new segments every few seconds, and the CDN reads segments to serve to viewers.

Bucket configuration for live streaming: public read access on the live-content prefix to allow CDN fetches, versioning disabled because manifests are overwritten rather than versioned, and a lifecycle rule that expires segments older than 2 hours for pure-live streams.
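In the standard S3 lifecycle API, expiration is expressed in whole days, and this article does not say whether sub-day rules are available. If they are not, a small scheduled job can apply the 2-hour rule. The sketch below shows only the selection logic; it assumes objects shaped like the `Contents` entries returned by an S3 `list_objects_v2` call, a hypothetical `live/` prefix, and `.ts` segment files.

```python
from datetime import datetime, timedelta, timezone


def keys_to_expire(objects: list[dict], now: datetime,
                   max_age: timedelta = timedelta(hours=2),
                   prefix: str = "live/") -> list[str]:
    """Return keys of segment objects under `prefix` older than `max_age`.

    Each object is a dict with "Key" (str) and "LastModified"
    (timezone-aware datetime). Manifests are left alone.
    """
    cutoff = now - max_age
    return [
        obj["Key"]
        for obj in objects
        if obj["Key"].startswith(prefix)
        and obj["Key"].endswith(".ts")
        and obj["LastModified"] < cutoff
    ]


now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
listing = [
    {"Key": "live/ch1/720p/seg_000001.ts", "LastModified": now - timedelta(hours=3)},
    {"Key": "live/ch1/720p/seg_000900.ts", "LastModified": now - timedelta(minutes=5)},
    {"Key": "live/ch1/720p/playlist.m3u8", "LastModified": now - timedelta(hours=3)},
]
expired = keys_to_expire(listing, now)
```

The returned keys can then be passed to a batch delete call.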

CDN Layer: Edge Delivery

The CDN sits between IBEE and viewers. Viewers' players request the manifest and segments from CDN edge nodes, not directly from IBEE. The CDN fetches from IBEE only on cache miss.

For live streaming, manifest cache TTL must be short: typically 2 to 4 seconds, or equal to the segment duration. Manifests change on every new segment, so a viewer whose player receives a stale cached manifest will fall behind the live edge. Segment files are immutable once written and can be cached with a long TTL, for as long as they remain within the DVR window or retention period.
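One way to express this policy is to set a `Cache-Control` header per object type at upload time. The sketch below assumes the CDN honours origin `Cache-Control` headers, that manifests end in `.m3u8`, and that a one-hour segment TTL is acceptable; all three are assumptions to check against the CDN in use.

```python
def cache_control_for(key: str, segment_seconds: int = 4,
                      segment_ttl_seconds: int = 3600) -> str:
    """Pick a Cache-Control value: short for manifests, long for segments."""
    if key.endswith(".m3u8"):
        # Manifests change on every new segment, so cache for one segment at most.
        return f"public, max-age={segment_seconds}"
    # Segments never change after upload.
    return f"public, max-age={segment_ttl_seconds}, immutable"


manifest_header = cache_control_for("live/ch1/720p/playlist.m3u8")
segment_header = cache_control_for("live/ch1/720p/seg_000042.ts")
```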

Write path from transcoder to IBEE, read path from CDN edge to viewer

Segment Storage Key Structure

A well-designed live stream key structure separates the master manifest, quality-level manifests, and individual segment files into a predictable hierarchy. The master manifest is written once at stream start. Quality manifests are overwritten on every new segment. Segment files accumulate until the lifecycle rule expires them.
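As an illustration, the helpers below generate keys for one possible hierarchy. The `live/<stream>/<quality>/` layout and the file names are hypothetical; the point is that each object type has a predictable location and that zero-padded segment numbers sort in playback order.

```python
def master_key(stream_id: str) -> str:
    """Key of the master manifest, written once at stream start."""
    return f"live/{stream_id}/master.m3u8"


def quality_manifest_key(stream_id: str, quality: str) -> str:
    """Key of a quality-level manifest, overwritten on every new segment."""
    return f"live/{stream_id}/{quality}/playlist.m3u8"


def segment_key(stream_id: str, quality: str, index: int) -> str:
    """Key of one segment; zero padding keeps lexical order equal to sequence order."""
    return f"live/{stream_id}/{quality}/seg_{index:06d}.ts"


example_keys = [
    master_key("match-final"),
    quality_manifest_key("match-final", "720p"),
    segment_key("match-final", "720p", 42),
]
```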

For live-to-VOD workflows where the stream is available for replay after the broadcast, do not expire segments. The complete segment sequence forms the VOD asset. After the stream ends, generate final quality-level manifests that list the full segment sequence, together with a master manifest that references them, and write them to the VOD bucket.
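A final manifest for replay differs from a live one in two ways: it lists every segment from the start, and it is marked as complete. The sketch below builds such a playlist for a single quality level, assuming the segment URIs and durations were recorded during the broadcast. The function name and input shape are illustrative.

```python
import math


def build_vod_playlist(segments: list[tuple[str, float]]) -> str:
    """Build a complete HLS media playlist.

    `segments` is a list of (uri, duration_seconds) in playback order.
    """
    if not segments:
        raise ValueError("at least one segment is required")
    target = math.ceil(max(duration for _, duration in segments))
    lines = [
        "#EXTM3U",
        "#EXT-X-VERSION:3",
        f"#EXT-X-TARGETDURATION:{target}",
        "#EXT-X-MEDIA-SEQUENCE:0",
        "#EXT-X-PLAYLIST-TYPE:VOD",
    ]
    for uri, duration in segments:
        lines.append(f"#EXTINF:{duration:.3f},")
        lines.append(uri)
    lines.append("#EXT-X-ENDLIST")  # tells players the stream has ended
    return "\n".join(lines) + "\n"


vod_text = build_vod_playlist([
    ("seg_000000.ts", 4.0),
    ("seg_000001.ts", 4.0),
    ("seg_000002.ts", 2.5),
])
```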

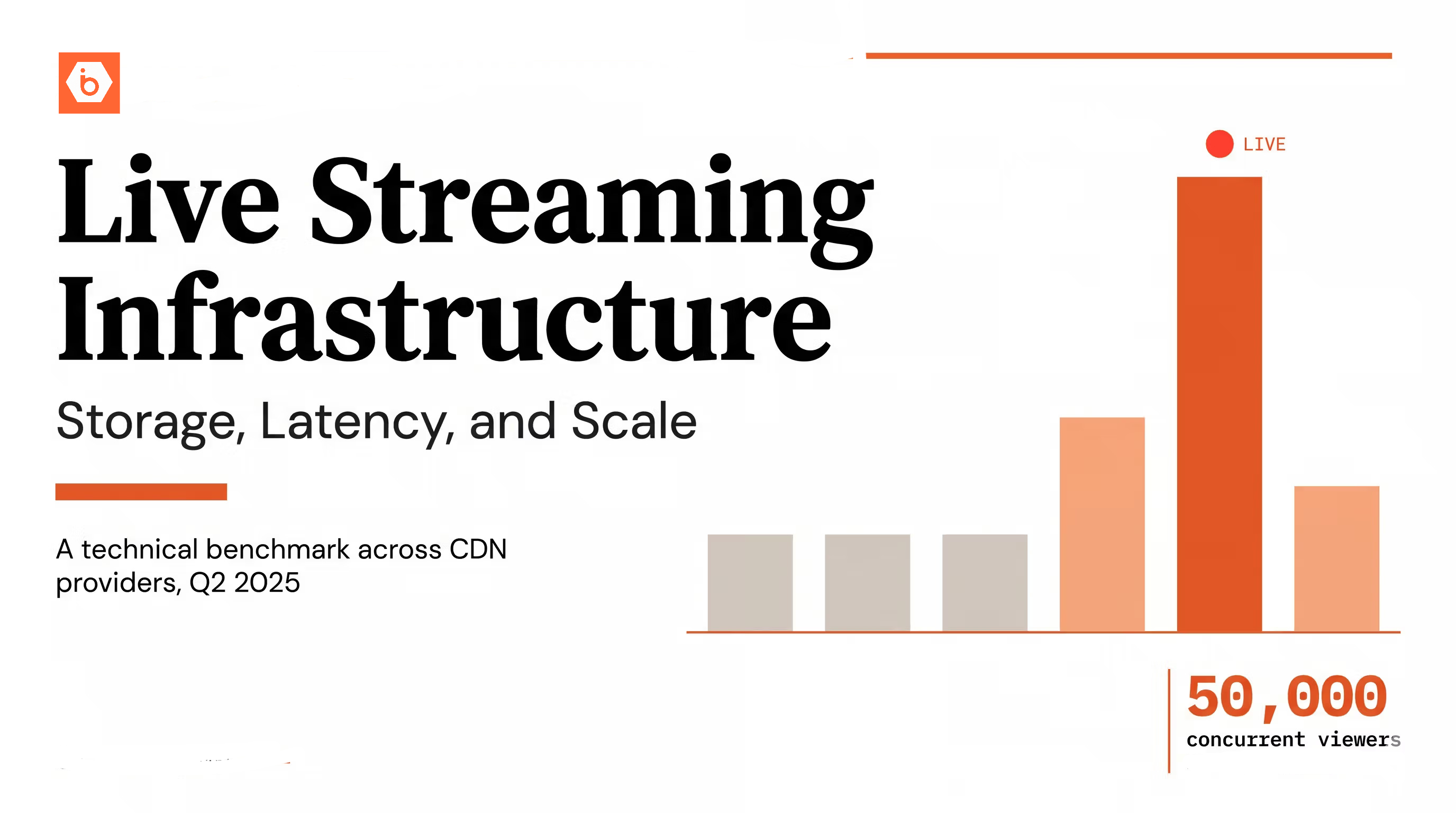

Handling Concurrent Viewers at Scale

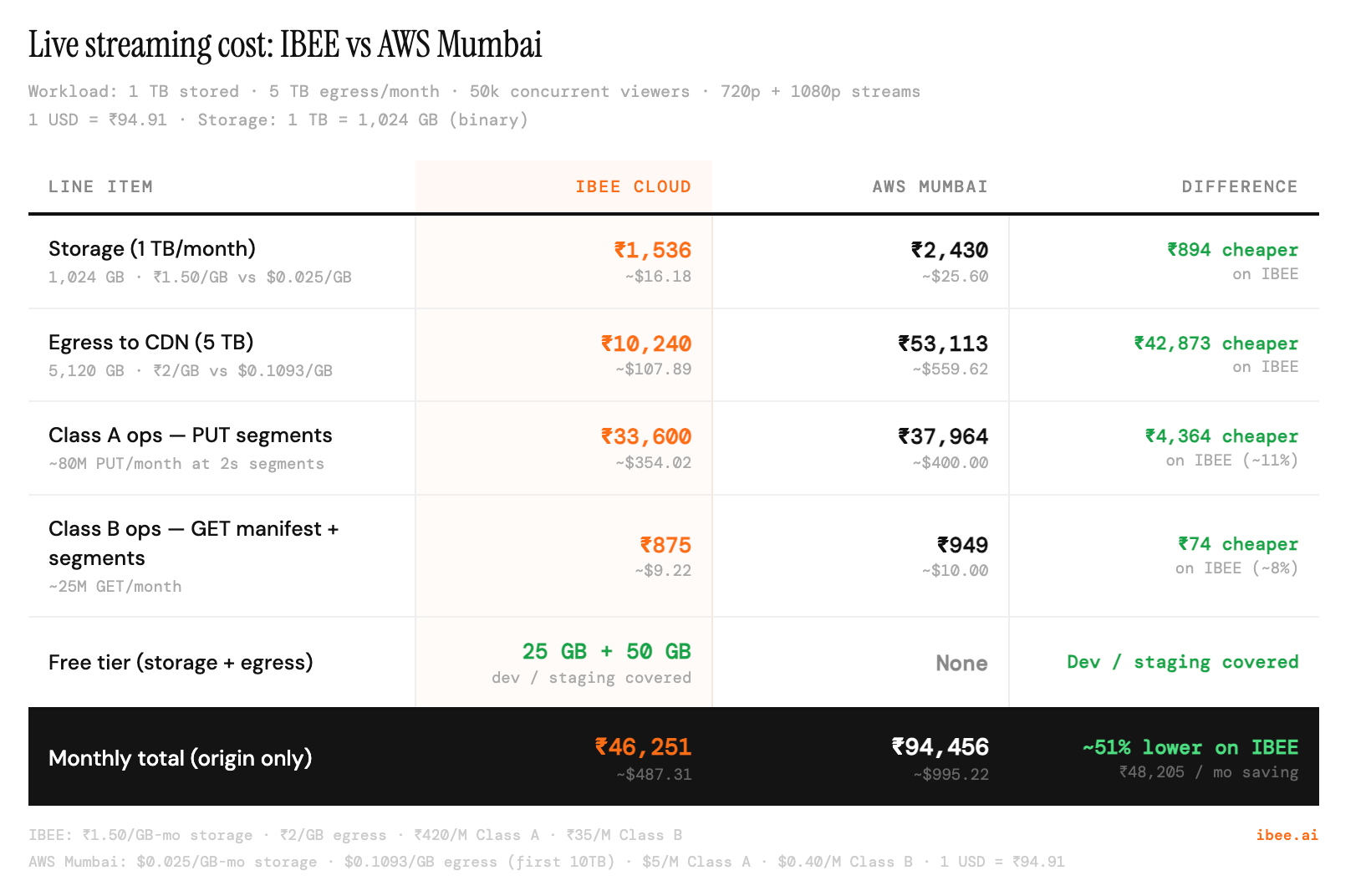

A live event with 50,000 concurrent viewers all requesting the manifest every 4 seconds generates 12,500 manifest requests per second. Without a CDN, this is 12,500 GET requests per second against the IBEE origin. That is technically feasible but unnecessary, expensive in API request costs, and fragile under burst traffic.

With a CDN and a 4-second manifest cache TTL, the origin sees one manifest request per CDN edge node per 4 seconds: perhaps 100 requests per second across a typical CDN's Indian edge network, regardless of how many viewers are behind each edge. The CDN absorbs the fan-out.
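The arithmetic behind these two figures is the same formula applied to different client counts. The edge count of 400 is an assumption chosen to match the article's estimate of about 100 requests per second; real CDN footprints vary. The calculation also treats the manifest as a single file, whereas each quality level has its own.

```python
def manifest_requests_per_second(clients: int, poll_interval_s: float) -> float:
    """Requests per second when each client fetches the manifest once per interval."""
    return clients / poll_interval_s


viewer_rps = manifest_requests_per_second(50_000, 4)  # no CDN: every viewer hits origin
origin_rps = manifest_requests_per_second(400, 4)     # with CDN: 400 edge nodes (assumed)
reduction_factor = viewer_rps / origin_rps

print(viewer_rps, origin_rps, reduction_factor)  # 12500.0 100.0 125.0
```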

Segment requests follow the same pattern. The first viewer at each CDN edge to request a given segment fetches from origin. Every subsequent viewer at that edge within the segment's cache TTL is served from cache. For popular live streams, segment cache hit rates at major CDN edges reach 90 to 99 percent.

The practical implication is that IBEE origin egress for a 50,000-concurrent-viewer live stream is a fraction of the total egress volume. Most of the traffic is served from CDN cache. Origin costs scale with CDN edge count, not viewer count. We speak to a lot of teams running sports events who assume origin egress will be their largest cost. After the first event with a properly configured CDN, that assumption changes quickly.
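A rough model makes the scaling concrete. It assumes each edge node pulls every segment of every quality level from origin exactly once, that there are 400 edge nodes, and that viewers average 3 Mbps. The ladder bitrates are also assumed values. None of these numbers come from measurements.

```python
LADDER_KBPS = [5000, 2800, 1400, 800]  # assumed bitrates for 1080p/720p/480p/360p


def origin_egress_kbps(edge_count: int, ladder_kbps: list[int]) -> int:
    """Upper bound on origin egress: every edge pulls every rendition once."""
    return edge_count * sum(ladder_kbps)


def viewer_egress_kbps(viewers: int, avg_bitrate_kbps: int) -> int:
    """Total egress delivered to viewers by the CDN."""
    return viewers * avg_bitrate_kbps


origin = origin_egress_kbps(400, LADDER_KBPS)   # 4,000,000 kbps, about 4 Gbps
total = viewer_egress_kbps(50_000, 3000)        # 150,000,000 kbps, about 150 Gbps
origin_share = origin / total

print(f"origin carries {origin_share:.1%} of delivered traffic")
```

In this model, doubling the viewer count leaves the origin figure unchanged, while doubling the edge count doubles it.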

DVR Window and Seek-Back Capability

Many live streaming platforms offer a DVR window: the ability for viewers to pause a live stream and seek back up to 30 or 60 minutes. This is implemented at the segment storage layer.

For a 30-minute DVR window, the manifest file lists all segments from the last 30 minutes. At 4-second segments, this is 450 segments. As new segments are added, old segments past the window are removed from the manifest listing. The segment files themselves remain in storage until the lifecycle policy expires them.
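The sliding window can be sketched as a function that, given the index of the newest segment, lists only the segments still inside the window. The segment naming and the fixed segment duration are assumptions for illustration.

```python
def build_dvr_playlist(latest_index: int, segment_seconds: int = 4,
                       window_seconds: int = 1800,
                       uri_template: str = "seg_{:06d}.ts") -> str:
    """Build a live HLS media playlist covering the last `window_seconds`."""
    window_len = window_seconds // segment_seconds  # 450 for 30 min at 4 s
    first = max(0, latest_index - window_len + 1)
    lines = [
        "#EXTM3U",
        "#EXT-X-VERSION:3",
        f"#EXT-X-TARGETDURATION:{segment_seconds}",
        # Sequence number of the first listed segment; rises as old ones drop out.
        f"#EXT-X-MEDIA-SEQUENCE:{first}",
    ]
    for index in range(first, latest_index + 1):
        lines.append(f"#EXTINF:{segment_seconds:.3f},")
        lines.append(uri_template.format(index))
    # No #EXT-X-ENDLIST: the stream is still live.
    return "\n".join(lines) + "\n"


dvr_text = build_dvr_playlist(latest_index=999)
```

Segments that drop out of the listing are not deleted by this function; they stay in storage until the lifecycle rule removes them.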

A viewer who joins 20 minutes into a stream can still seek back to the start of the DVR window, because the manifest they receive lists every segment in the window, not only those published since they joined. Their player requests the older segments directly by key. Those segments were cached by the CDN when earlier viewers watched them live, so they are typically still in CDN cache.

IBEE vs AWS Mumbai: storage, egress, and API costs for a typical live streaming workload

Stream Recording and Archive

After a live broadcast ends, the complete stream is available as a sequence of segments in IBEE. Post-processing converts this into a standard VOD asset in three steps. First, either concatenate all segment files in order into a complete MP4, or keep the segment structure and write a final VOD manifest. Second, run the VOD thumbnail and preview generation pipeline. Third, move the processed VOD to the main VOD bucket, which has no expiry rule.

The live segment bucket can then be cleaned up. A lifecycle policy that deletes segments 24 hours after the stream ends keeps storage costs proportionate to active and recent content, rather than letting old segments accumulate indefinitely.