Object Storage Is More Than a File Bucket

Every engineering team uses object storage for something. Most use it as a place to put files the application generates or that users upload. The bucket is there, the files go in, the application reads them back out. Simple enough at the start.

Teams whose products scale well, staying cost-efficient as user counts grow and performant as data volumes increase, treat object storage as an architectural primitive with a set of patterns attached to it. The bucket is not just a file cabinet. It is a load distribution mechanism, a security boundary, a processing trigger, and a multitenant isolation layer.

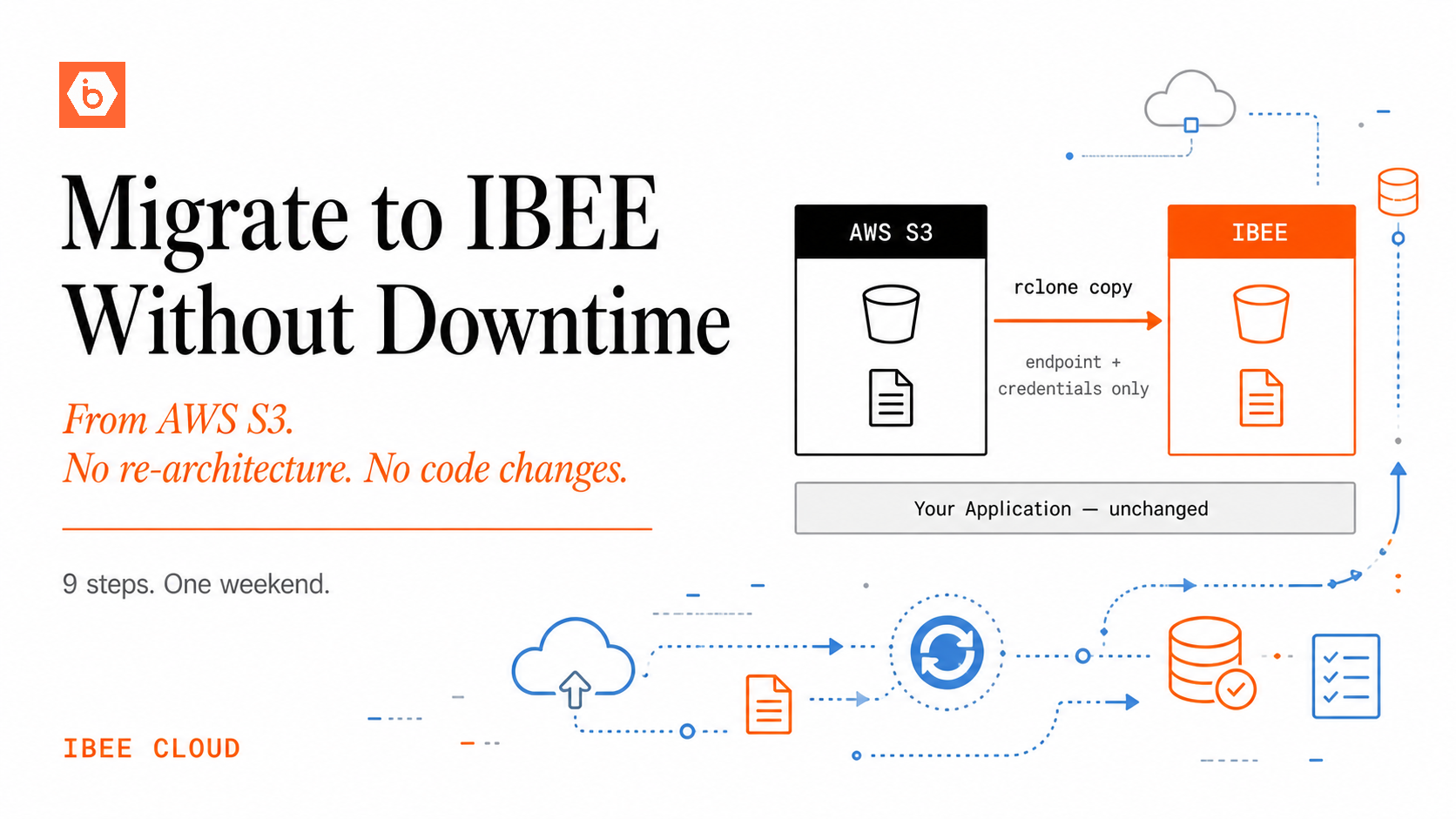

The five patterns below separate good storage architecture from a naive file-bucket approach. All five work on any S3-compatible storage provider, including IBEE. None of them requires switching providers or changing application frameworks: they are patterns, not products.

Pattern 1: Presigned URLs for Secure Time-Limited Access

The core problem this pattern solves is access control without proxying. The application stores files in a private bucket. Users need to access specific files, but making the bucket public exposes everything, and proxying every file download through the application server wastes compute and creates a bottleneck.

The solution is to generate a presigned URL server-side for each file access request. A presigned URL is a time-limited, cryptographically signed URL that allows the holder to access a specific object for a defined window, typically 5 to 60 minutes. The URL is generated by the server using storage credentials, passed to the client, and used by the client to fetch the file directly from storage. The storage layer handles delivery. The application server handles authorisation logic. Neither steps on the other's role.
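To make "cryptographically signed" concrete, here is a minimal sketch of how a presigned GET URL is built under AWS Signature Version 4, using only the Python standard library. In practice the SDK's presigning call does this work; the function name, the path-style addressing, and the example host and credentials are illustrative assumptions, not IBEE specifics.

```python
import datetime as dt
import hashlib
import hmac
from urllib.parse import quote


def _sign(key: bytes, msg: str) -> bytes:
    """One HMAC-SHA256 step of the SigV4 key-derivation chain."""
    return hmac.new(key, msg.encode("utf-8"), hashlib.sha256).digest()


def presign_get_url(host, bucket, key, access_key, secret_key,
                    region="us-east-1", expires_in=300, now=None):
    """Return a path-style presigned GET URL valid for `expires_in` seconds.

    Hypothetical helper for illustration; an SDK would normally do this.
    """
    now = now or dt.datetime.now(dt.timezone.utc)
    amz_date = now.strftime("%Y%m%dT%H%M%SZ")
    datestamp = now.strftime("%Y%m%d")
    scope = f"{datestamp}/{region}/s3/aws4_request"

    # Path-style addressing: https://<host>/<bucket>/<key>
    canonical_uri = "/" + quote(f"{bucket}/{key}", safe="/-_.~")

    # Query parameters are sorted by name and strictly percent-encoded.
    params = {
        "X-Amz-Algorithm": "AWS4-HMAC-SHA256",
        "X-Amz-Credential": f"{access_key}/{scope}",
        "X-Amz-Date": amz_date,
        "X-Amz-Expires": str(expires_in),
        "X-Amz-SignedHeaders": "host",
    }
    canonical_query = "&".join(
        f"{quote(k, safe='-_.~')}={quote(v, safe='-_.~')}"
        for k, v in sorted(params.items())
    )

    canonical_request = "\n".join([
        "GET",
        canonical_uri,
        canonical_query,
        f"host:{host}\n",    # canonical headers, each terminated by a newline
        "host",              # signed header names
        "UNSIGNED-PAYLOAD",  # the body is not signed for presigned URLs
    ])
    string_to_sign = "\n".join([
        "AWS4-HMAC-SHA256",
        amz_date,
        scope,
        hashlib.sha256(canonical_request.encode("utf-8")).hexdigest(),
    ])

    # Derive the signing key: date -> region -> service -> "aws4_request".
    signing_key = _sign(("AWS4" + secret_key).encode("utf-8"), datestamp)
    for part in (region, "s3", "aws4_request"):
        signing_key = _sign(signing_key, part)
    signature = hmac.new(
        signing_key, string_to_sign.encode("utf-8"), hashlib.sha256
    ).hexdigest()

    return (f"https://{host}{canonical_uri}?{canonical_query}"
            f"&X-Amz-Signature={signature}")
```

The secret key never leaves the server. The client only sees the signature, which is bound to this one object, this HTTP method, and this expiry window, so changing any of them invalidates the URL.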

Proxying adds latency and caps throughput at the server's bandwidth limit, and every megabyte of user data flowing through the server is infrastructure cost that does not need to exist. Presigned URLs eliminate that cost while retaining full access control. They are part of the standard S3 API, so they work across S3-compatible providers including IBEE through the SDK's presigning call, for example getSignedUrl in the AWS SDK for JavaScript or generate_presigned_url in boto3.

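One detail worth getting right is expiry time. A 24-hour presigned URL for a sensitive document is a meaningful security exposure, because anyone who obtains the link can use it until it expires. A 5-minute presigned URL for a large video file may expire before the user is done with it: on AWS S3, for example, expiry is checked when each request starts, so a transfer already in progress continues, but a resumed download or a player's later range request is rejected once the window has passed. Match the expiry window to the specific use case rather than defaulting to one value across all file types.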
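One way to avoid a single default is to derive the expiry from a per-category policy plus a rough transfer-time estimate. The sketch below is illustrative only: the category names, the floor and ceiling values, and the assumed client bandwidth are all hypothetical and should be tuned to the product.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ExpiryPolicy:
    floor_s: int    # never issue a URL shorter than this
    ceiling_s: int  # never issue a URL longer than this


# Hypothetical categories and limits, chosen only for illustration.
POLICIES = {
    "sensitive": ExpiryPolicy(floor_s=60, ceiling_s=300),
    "standard": ExpiryPolicy(floor_s=300, ceiling_s=3600),
    "large_media": ExpiryPolicy(floor_s=900, ceiling_s=6 * 3600),
}


def choose_expiry_seconds(kind: str, size_bytes: int,
                          assumed_bps: int = 5_000_000) -> int:
    """Pick a presigned-URL lifetime for one object.

    Estimates the transfer time at `assumed_bps` (bits per second), doubles
    it as headroom for retries and resumed downloads, then clamps the result
    to the category's floor and ceiling.
    """
    policy = POLICIES[kind]
    estimated = math.ceil(size_bytes * 8 / assumed_bps * 2)
    return max(policy.floor_s, min(policy.ceiling_s, estimated))
```

With these assumed values, a small sensitive document gets the 60-second floor, while a 2 GB video gets a window sized to its estimated transfer time, capped at six hours.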

Pattern 2: Direct-to-Storage Uploads

The problem here is the double-transfer penalty. Users upload files through the application, the server receives the upload into memory or disk, and then writes to storage. For large files this is slow, resource-intensive, and means the same bytes travel twice across the network: once to the server, once to storage.

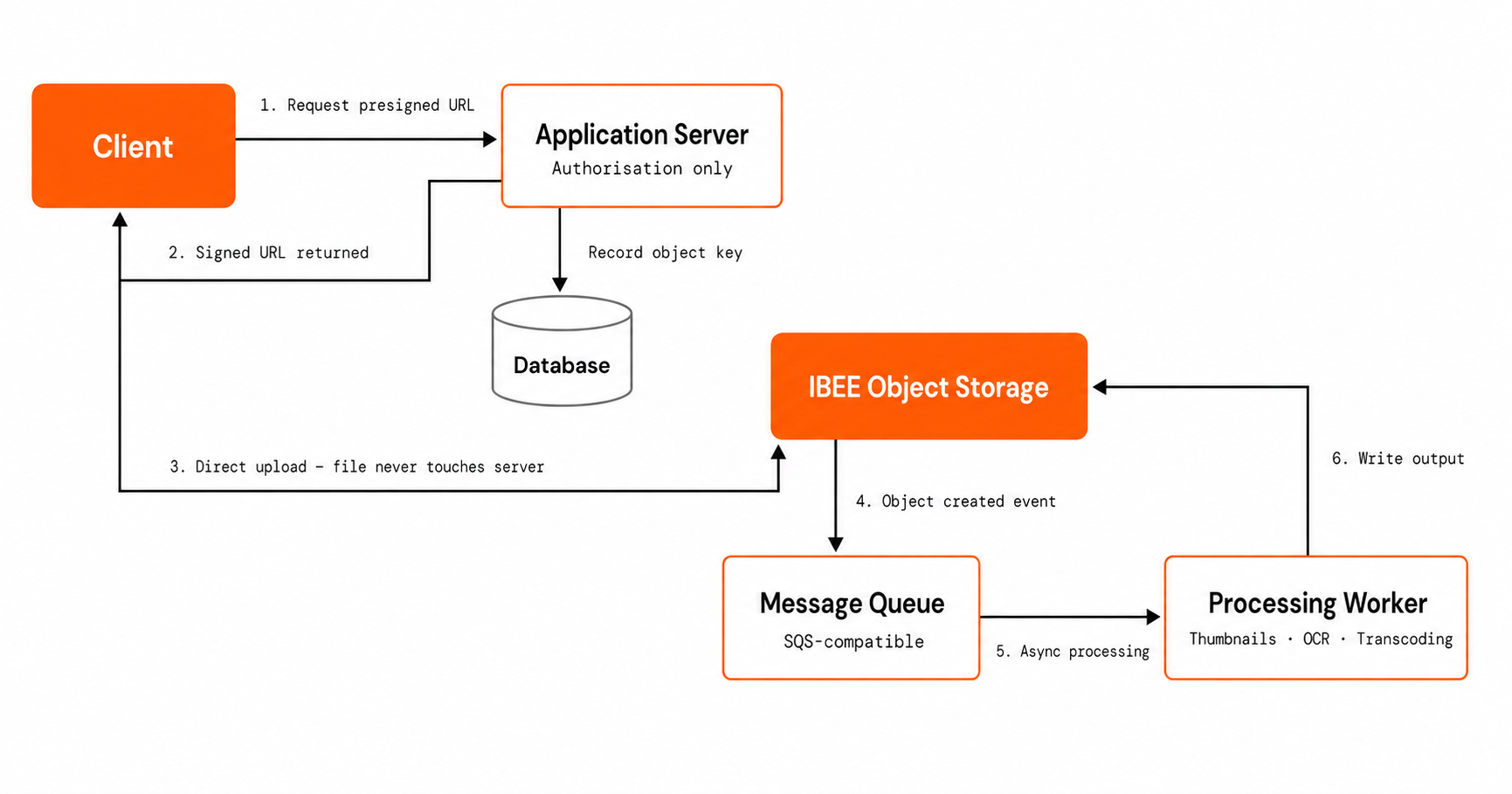

The pattern is to generate a presigned PUT URL (or a presigned POST policy for browser form uploads) that allows the client to upload directly to the storage bucket, keeping the file bytes off the application server. The server authorises the request, generates the signed upload URL, and returns it to the client, and the client uploads directly to IBEE. Once the upload completes, the server is notified, either by the client or by a storage event, and records the object's location in the database. The file never touches the application server.

For an edtech platform where students upload assignments, a marketplace where sellers upload product images, or a legal platform where clients submit documents, this matters significantly. The server handles authorisation and metadata. Storage handles the bytes.

For large files, use S3 multipart upload via presigned URLs: start the multipart upload on the server, generate a signed URL for each part, have the client upload parts in parallel, and complete the multipart upload once all parts are confirmed. On AWS S3 a single PUT tops out at 5 GB, so anything larger has to be multipart, and AWS suggests considering multipart from around 100 MB. Parallel parts raise throughput on large uploads, and a failed part can be retried on its own instead of restarting the whole file.
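Before signing per-part URLs, the server has to decide how to split the file. The sketch below plans the parts under the limits AWS S3 documents (5 MiB minimum part size except for the last part, at most 10,000 parts); other S3-compatible providers may set different limits, and the 64 MiB preferred part size is an arbitrary assumption.

```python
MIN_PART = 5 * 1024**2   # AWS S3 minimum part size (the last part may be smaller)
MAX_PARTS = 10_000       # AWS S3 maximum number of parts per upload


def plan_parts(size_bytes: int, preferred_part: int = 64 * 1024**2):
    """Split an upload into (part_number, offset, length) tuples.

    Part numbers start at 1, as in the S3 multipart API. The part size grows
    beyond `preferred_part` only when needed to stay within MAX_PARTS.
    """
    if size_bytes <= 0:
        raise ValueError("size_bytes must be positive")
    smallest_allowed = -(-size_bytes // MAX_PARTS)  # integer ceiling division
    part = max(MIN_PART, preferred_part, smallest_allowed)
    count = -(-size_bytes // part)
    return [
        (n, (n - 1) * part, min(part, size_bytes - (n - 1) * part))
        for n in range(1, count + 1)
    ]
```

The server would then presign one upload URL per tuple, and the client would send the byte range `offset` to `offset + length` to each URL, collecting the returned ETags for the final completion call.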

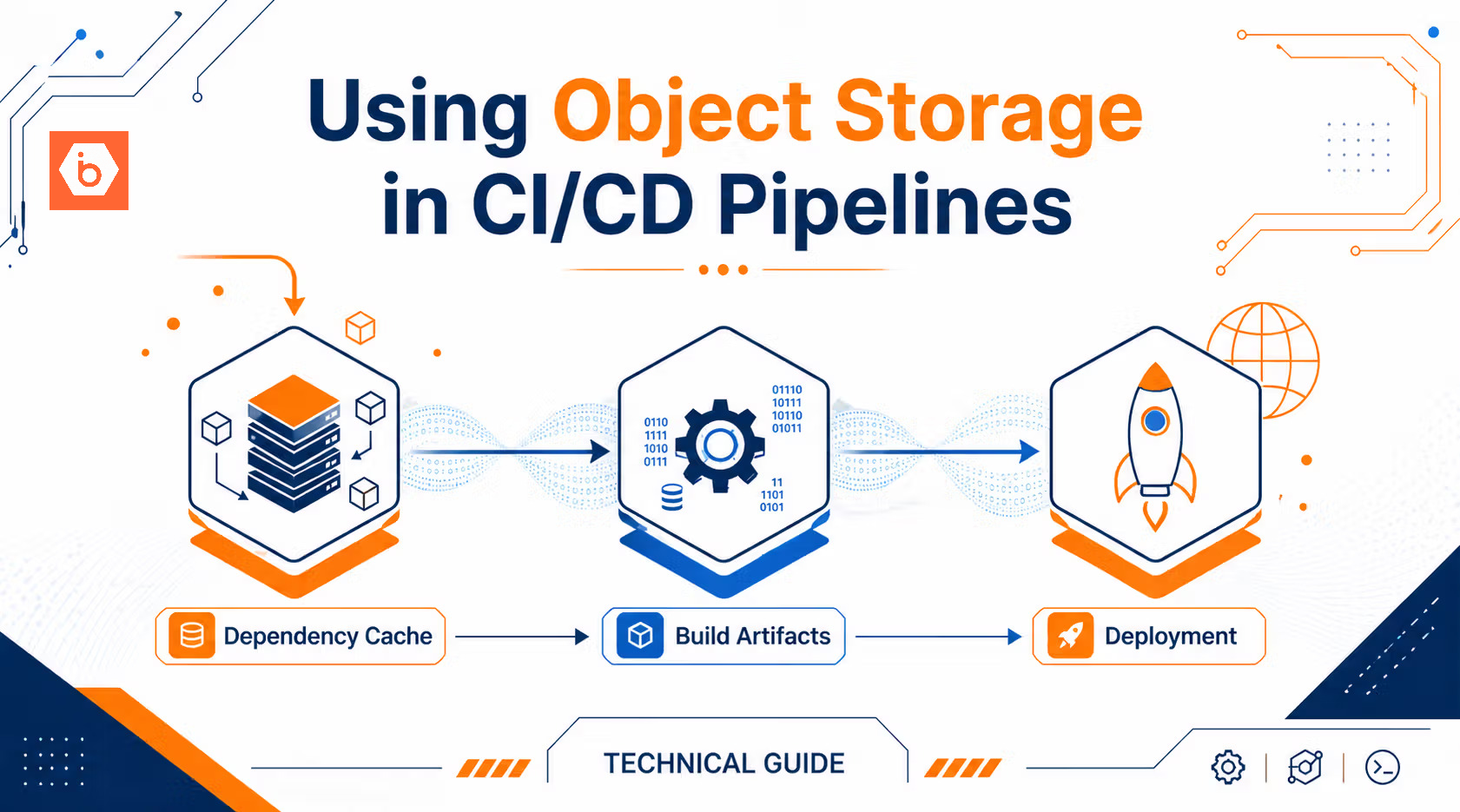

Pattern 3: Event-Driven Processing Pipelines

When a user uploads an image, thumbnails need to be generated. When a video arrives, transcoding needs to run. When a document is submitted, OCR needs to execute. The naive implementation runs this processing synchronously in the upload handler, which blocks the response, increases latency for the user, and ties processing capacity directly to upload concurrency.

The pattern is to configure object storage event notifications to trigger downstream processing automatically when new objects land in the bucket. The storage layer emits an event on object creation, which triggers a queue message, a webhook, or a serverless function that runs the processing job asynchronously. Ingestion and processing are decoupled. Each scales independently. Failed processing jobs can be retried without requiring the user to re-upload.

IBEE's S3-compatible API supports bucket event notifications. Configure notifications to publish to an SQS-compatible queue or a custom message broker, and have processing workers consume from the queue. For image thumbnails, video transcoding, document parsing, or any transformation on uploaded content, this pattern removes the synchronous processing bottleneck from the upload path entirely.
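A worker consuming these notifications might look like the sketch below. It assumes the message body follows the AWS S3 event notification JSON layout (a `Records` list whose object keys are URL-encoded), which an S3-compatible provider may or may not reproduce exactly. The function and class names are hypothetical, and the in-memory set stands in for a durable deduplication store.

```python
import json
from urllib.parse import unquote_plus


def jobs_from_event(body: str):
    """Extract one processing job per newly created object in a notification."""
    jobs = []
    for record in json.loads(body).get("Records", []):
        # Ignore deletions and other event types.
        if not record.get("eventName", "").startswith("ObjectCreated:"):
            continue
        s3 = record["s3"]
        jobs.append({
            "bucket": s3["bucket"]["name"],
            # Keys arrive URL-encoded, with spaces sent as '+'.
            "key": unquote_plus(s3["object"]["key"]),
            "etag": s3["object"].get("eTag"),
        })
    return jobs


class IdempotentWorker:
    """Runs `process` at most once per (bucket, key, etag).

    Queues commonly deliver a message more than once, so the worker has to
    tolerate duplicates. A real deployment would keep `_done` in a database.
    """

    def __init__(self, process):
        self._process = process
        self._done = set()

    def handle(self, job) -> bool:
        job_id = (job["bucket"], job["key"], job["etag"])
        if job_id in self._done:
            return False  # duplicate delivery, nothing to do
        self._process(job)           # e.g. generate thumbnails, transcode, OCR
        self._done.add(job_id)       # marked done only after success
        return True
```

Because a job is marked done only after `process` returns, a job that raises stays eligible for retry when the queue redelivers it, without the user re-uploading anything.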


Pattern 4: Bucket Per Tenant for Multitenant Isolation

For SaaS products storing data on behalf of multiple customer organisations, the shared bucket approach — all tenants in one bucket separated by key prefix — puts the full responsibility for isolation on the application code. A misconfiguration in the access control layer can expose one tenant's data to another. The storage layer provides no backstop.

The bucket-per-tenant pattern provisions a dedicated bucket for each tenant at signup. Each tenant's data is logically isolated at the bucket level, even though buckets share the provider's underlying hardware. IAM policies grant each tenant's application credentials access only to their own bucket. As long as requests are made with those per-tenant credentials, an application-level mistake while serving one tenant cannot reach another tenant's data, because the storage layer enforces the bucket boundary regardless of what the application layer does.

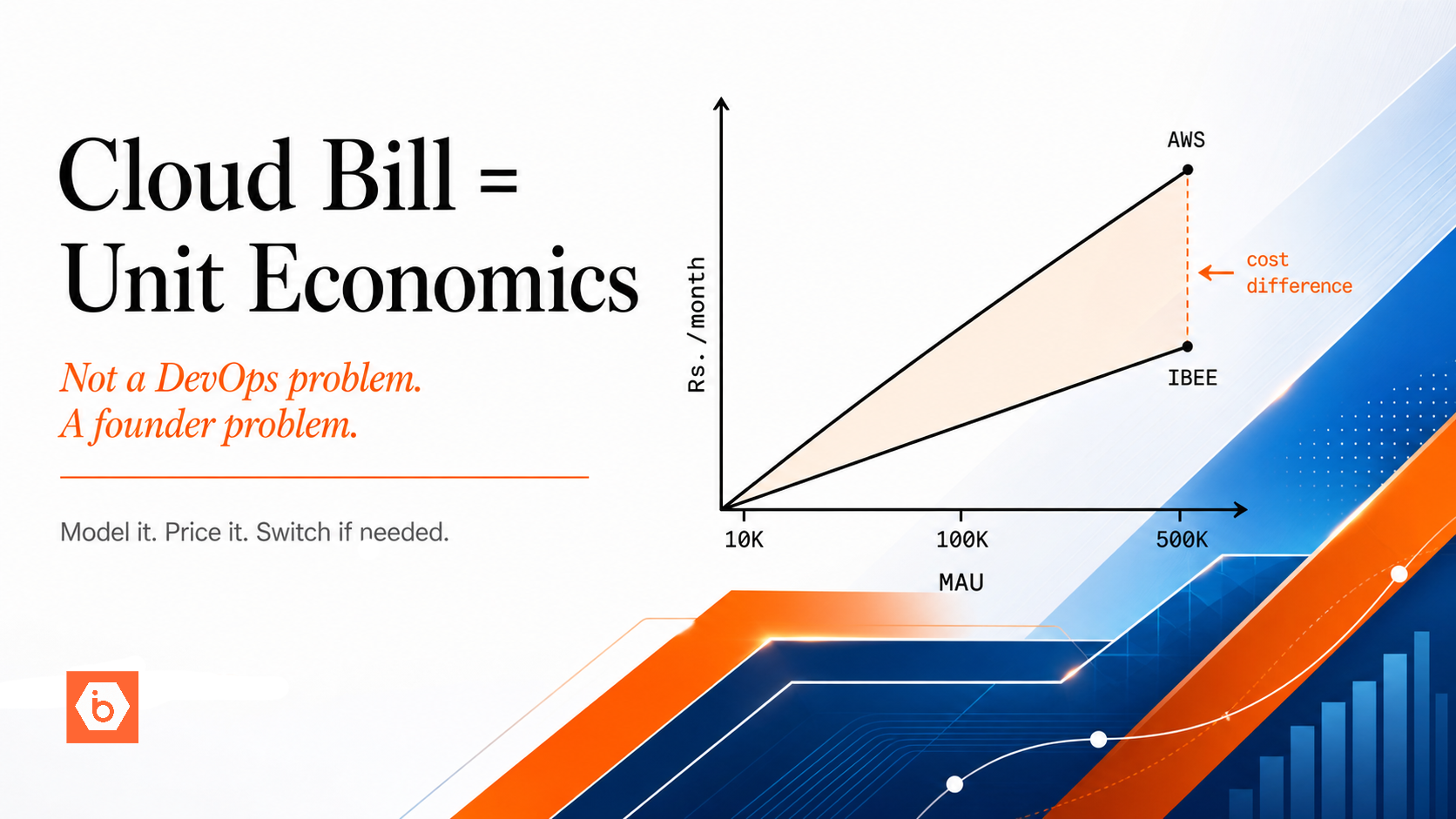

For B2B SaaS, data isolation is often a contractual requirement that enterprise procurement teams ask about directly. Beyond compliance, bucket-per-tenant makes per-customer storage billing exact and auditable, allows independent lifecycle policies per tenant, and makes offboarding clean. Deleting a customer account means deleting their bucket. Storage is billed by volume, at IBEE's rate of Rs.1.50 per GB per month ($0.016/GB/month), so splitting data across buckets does not change the storage bill. The only added cost is bucket creation, and provisioning 10,000 tenant buckets costs approximately Rs.4.20 in Class A API fees, which is effectively zero. The operational overhead is in automating the provisioning, not in the storage cost.
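Automating the provisioning mostly comes down to two pure functions: one that derives a valid bucket name from a tenant identifier, and one that builds the policy scoped to that bucket. The sketch below assumes AWS-style bucket naming rules (3 to 63 characters, lowercase letters, digits, and hyphens) and the AWS IAM policy document format. The `app-tenant` prefix and the chosen action list are hypothetical.

```python
import hashlib
import re


def tenant_bucket_name(tenant_id: str, prefix: str = "app-tenant") -> str:
    """Derive a stable, valid bucket name from an arbitrary tenant identifier.

    A short hash suffix keeps names unique even when two identifiers
    normalise to the same slug.
    """
    slug = re.sub(r"[^a-z0-9]+", "-", tenant_id.lower()).strip("-")
    if not slug:
        raise ValueError("tenant_id must contain at least one letter or digit")
    suffix = hashlib.sha256(tenant_id.encode("utf-8")).hexdigest()[:8]
    room = 63 - len(prefix) - len(suffix) - 2  # two joining hyphens
    return f"{prefix}-{slug[:room].strip('-')}-{suffix}"


def tenant_policy(bucket: str) -> dict:
    """Policy document granting access to exactly one tenant bucket."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {   # listing applies to the bucket itself
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": [f"arn:aws:s3:::{bucket}"],
            },
            {   # object operations apply to keys inside the bucket
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:PutObject", "s3:DeleteObject"],
                "Resource": [f"arn:aws:s3:::{bucket}/*"],
            },
        ],
    }
```

At signup, the provisioning job would create the bucket, attach the generated policy to that tenant's credentials, and store the bucket name on the tenant record. No wildcard resource appears in the policy, so the credentials cannot name any other bucket.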

Pattern 5: Static Asset Serving Directly From Storage

Static assets — JavaScript bundles, CSS files, images, fonts — are commonly served through the web server or through a CDN that uses the web server as origin. This adds an unnecessary hop. The web server contributes nothing to static file delivery except latency and load.

The pattern is to serve static assets directly from a public S3-compatible bucket. The build pipeline uploads compiled assets to the bucket on each deployment. The CDN or application HTML references the storage URL directly. The storage layer becomes the origin for all static content, and the web server is removed from the static delivery path entirely.

This reduces infrastructure complexity, eliminates a class of server load, and often improves origin response times. For single-page applications and asset-heavy dashboards where static assets are updated frequently, it also simplifies the deployment pipeline: deploying a new frontend version is an object upload, not a server restart, and when asset filenames include a content hash there is no cache invalidation sequence either, because each new version gets a new URL. On IBEE, configure the bucket for public read access, set appropriate cache-control headers on static asset objects, and point your CDN at the IBEE bucket as origin.
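A build step can avoid cache invalidation by putting a content hash in each asset's object key and choosing cache-control headers accordingly. The following is a minimal sketch of that step. The function names, the 12-character hash length, and the specific header values are assumptions, not IBEE requirements.

```python
import hashlib
from pathlib import PurePosixPath

# Hashed assets never change under the same name, so they can be cached for a year.
IMMUTABLE = "public, max-age=31536000, immutable"
# HTML entry points keep a stable name, so caches must revalidate them.
REVALIDATE = "no-cache"


def hashed_key(path: str, content: bytes) -> str:
    """Insert a short content hash before the file extension."""
    digest = hashlib.sha256(content).hexdigest()[:12]
    p = PurePosixPath(path)
    return str(p.with_name(f"{p.stem}.{digest}{p.suffix}"))


def upload_plan(files: dict):
    """Map build outputs to (object_key, cache_control) pairs.

    `files` maps a relative path to its bytes. HTML keeps its name, and
    everything else is renamed with a content hash.
    """
    plan = []
    for path, content in sorted(files.items()):
        if path.endswith(".html"):
            plan.append((path, REVALIDATE))
        else:
            plan.append((hashed_key(path, content), IMMUTABLE))
    return plan
```

The deployment script would upload each object with its planned Cache-Control header, and the HTML would reference the hashed keys. Old versions can stay in the bucket, so a rollback amounts to re-uploading the previous HTML.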

Putting the Patterns Together

These five patterns are not mutually exclusive. A well-architected product typically uses several simultaneously: presigned URLs for user file access, direct uploads to bypass the server, event-driven processing for new uploads, bucket-per-tenant for SaaS isolation, and direct static asset serving for the frontend.

Together they describe a storage architecture where the object storage layer handles its own concerns — access control, delivery, event triggering, tenant isolation — instead of routing everything through application server code. The result is a product that scales more gracefully, costs less per user as it grows, and is simpler to reason about operationally.

All five patterns work on any S3-compatible storage provider. IBEE's full S3 API compatibility means they work on IBEE without modification. For teams building products that serve users in India or across Asia, IBEE's India-resident infrastructure offers sub-5ms origin latency for regional users, so the presigned URL downloads, direct uploads, and static asset requests all travel through infrastructure that is geographically close to the end user rather than through a distant hyperscaler availability zone.