Why This Comparison Is Not What You Think It Is

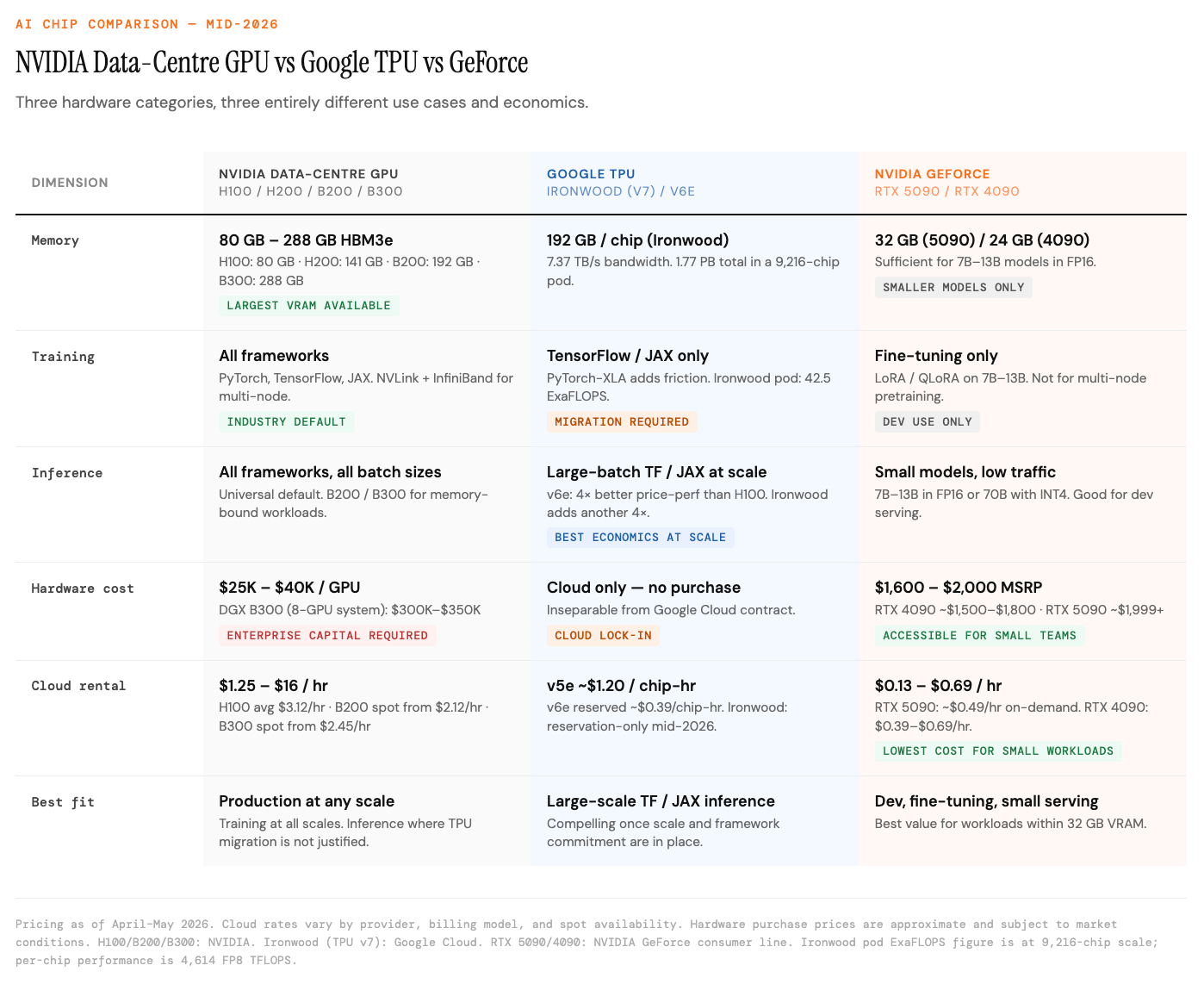

- When people compare GPU vs TPU vs GeForce, they are often conflating three hardware categories that operate in entirely different contexts, at entirely different price points, for entirely different use cases. Treating them as interchangeable options on a single spectrum produces bad decisions.

- NVIDIA's data-centre GPUs, the H100, H200, and the Blackwell generation now shipping in volume, are purpose-built enterprise accelerators designed for 24/7 data-centre operation at scale. Google's TPUs are application-specific integrated circuits available only through Google Cloud, designed to maximise efficiency for TensorFlow and JAX workloads. NVIDIA GeForce cards, primarily the RTX 4090 and RTX 5090, are consumer gaming GPUs that the AI community has adapted for research, prototyping, and small-scale inference at a fraction of the enterprise cost.

- The right question is not which is best. The right question is which category fits the specific workload, team scale, and budget. This article answers that question with current pricing and performance numbers as of mid-2026.

Part 1 — The Architecture Behind Each Chip

NVIDIA Data-Centre GPUs: The General-Purpose Workhorse

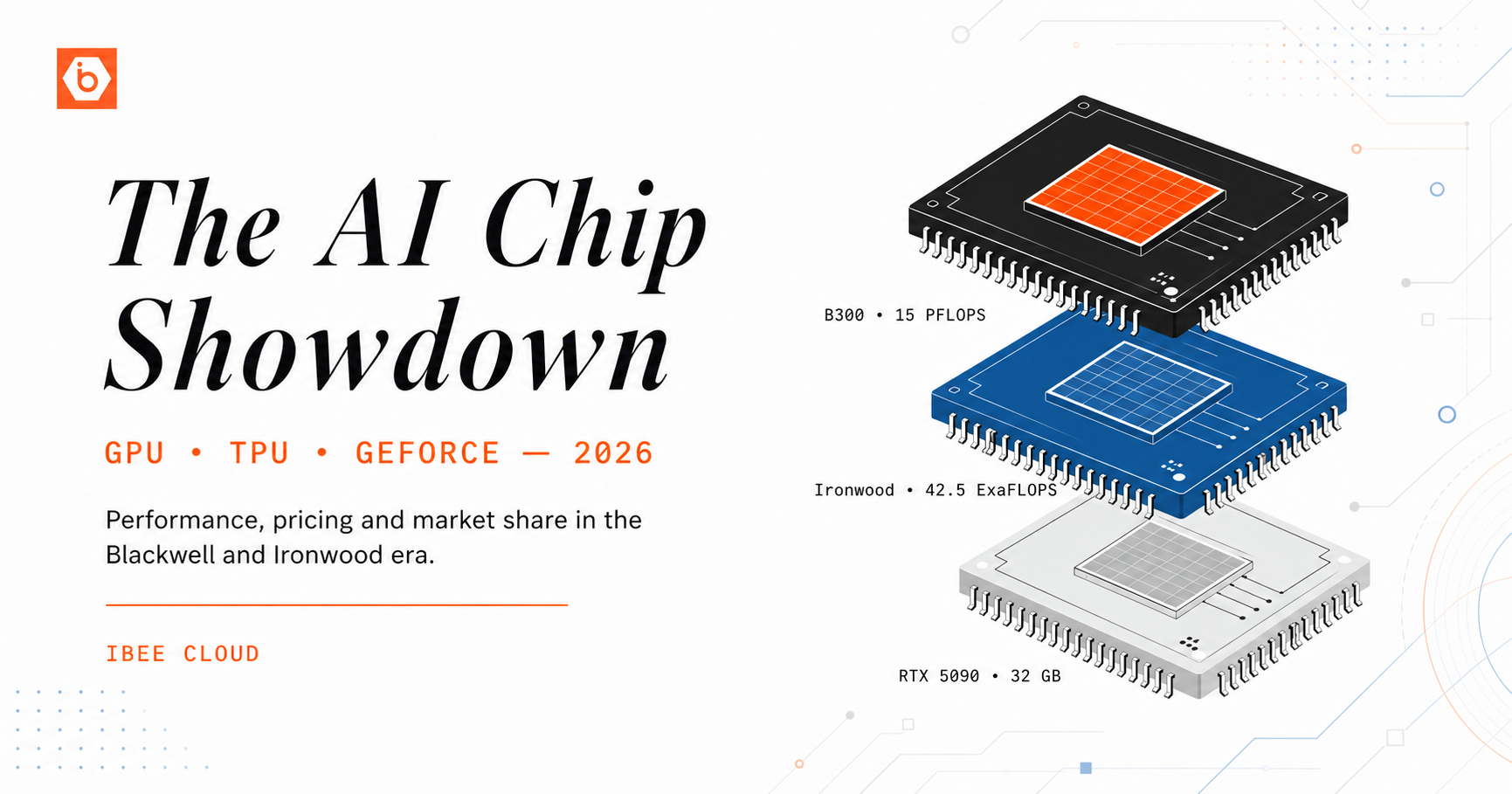

- NVIDIA's data-centre GPU roadmap spans three generations in quick succession. Hopper (H100 and H200) remains the most widely deployed. Blackwell (B100, B200, and the B300 Blackwell Ultra) is now shipping at volume. Rubin, the next architecture, is expected in the second half of 2026.

- The H100 delivers up to 3,958 TFLOPS in FP8 sparse mode with 80 GB of HBM3 at 3.35 TB/s bandwidth. The H200 extends that to 141 GB of HBM3e, easing capacity bottlenecks for models that exceed 80 GB. The B200 steps up to 192 GB of HBM3e at 8 TB/s bandwidth and 9 petaFLOPS (9,000 TFLOPS) of FP4 Tensor performance, roughly 4x the inference throughput of the H100. The B300, which began shipping in January 2026, raises that again: 288 GB of HBM3e at 8 TB/s (1.5x the B200's capacity) and 15 petaFLOPS of dense FP4 compute per chip, with a 1,400W TDP that requires direct liquid cooling. Looking further ahead, the Rubin NVL144 platform is targeting 8 exaflops of AI performance and 100 TB of fast memory in a single rack.

- The critical advantage that no spec sheet fully captures is CUDA's software ecosystem. Nearly two decades of accumulated libraries, frameworks, and developer tooling mean that virtually every AI research paper, every open-source model release, and every production inference framework defaults to CUDA. PyTorch, Hugging Face, vLLM, TensorRT, and the rest of the production AI stack are CUDA-first. Switching away from this ecosystem carries a real and measurable cost.

Google TPUs: From Efficiency Engine to Inference Platform

- Google's Tensor Processing Units are ASICs built from the silicon up to run tensor operations using a systolic array architecture. The current production generation is TPU v7, known as Ironwood, which reached general availability at Google Cloud Next on April 22, 2026.

- Ironwood delivers 4,614 FP8 TFLOPS per chip with 192 GB of HBM3e at 7.37 TB/s bandwidth. At pod scale, 9,216 Ironwood chips deliver 42.5 FP8 exaFLOPS of compute. Google puts that at roughly 24x the world's largest GPU-based supercomputer, a comparison that depends heavily on which numeric precision is being measured. Google describes Ironwood as a 10x peak performance improvement over TPU v5p and more than 4x better performance per chip than TPU v6e (Trillium) for both training and inference. The all-in total cost of ownership per Ironwood chip, in a full 3D Torus configuration, is estimated to be roughly 44% lower than an equivalent GB200 server.

- Simultaneously with Ironwood's general availability, Google announced its eighth-generation TPU architecture: TPU 8t (codenamed Sunfish), a Broadcom-designed training chip, and TPU 8i (codenamed Zebrafish), a MediaTek-designed inference-only chip. Both target TSMC's 2-nanometre process and are slated for late 2027. This is the first time Google has purpose-built separate training and inference chips, a significant architectural bifurcation that signals where the industry is heading.

- Anthropic is Ironwood's marquee customer, with access to up to one million TPU chips as part of a deal that has since expanded to 3.5 gigawatts of compute coming online in 2027. Meta has also been confirmed as a large TPU customer. Google projects 4.3 million TPU shipments in 2026, rising to 10 million in 2027 and more than 35 million in 2028. That ramp is unprecedented for custom silicon outside of NVIDIA.

- TPUs remain available exclusively through Google Cloud. There is no TPU hardware to purchase, and there is no TPU to run outside of Google's infrastructure.
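The pod-scale figure quoted above follows directly from the per-chip numbers. The sketch below reproduces that arithmetic; it assumes perfect linear scaling across chips, which is a peak-rate simplification rather than a measured result, and the function names are illustrative only.

```python
# Per-chip and pod figures are the ones quoted in this article.
CHIP_FP8_TFLOPS = 4_614   # Ironwood peak FP8 TFLOPS per chip
CHIPS_PER_POD = 9_216     # chips in a full Ironwood pod
HBM_PER_CHIP_GB = 192     # HBM3e capacity per chip

TFLOPS_PER_EXAFLOP = 1_000_000  # 1 exaFLOP = 10^6 teraFLOPS
GB_PER_PB = 1_000_000           # decimal units


def pod_exaflops(chip_tflops: float, chips: int) -> float:
    """Peak pod compute in exaFLOPS, assuming perfect linear scaling."""
    return chip_tflops * chips / TFLOPS_PER_EXAFLOP


def pod_hbm_petabytes(hbm_gb: float, chips: int) -> float:
    """Aggregate HBM across the pod in petabytes."""
    return hbm_gb * chips / GB_PER_PB


print(f"{pod_exaflops(CHIP_FP8_TFLOPS, CHIPS_PER_POD):.1f} FP8 exaFLOPS per pod")
print(f"{pod_hbm_petabytes(HBM_PER_CHIP_GB, CHIPS_PER_POD):.2f} PB of HBM per pod")
```

Real workloads rarely reach peak rates, so these numbers are better read as an upper bound than as expected throughput.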

NVIDIA GeForce: The Developer's Practical GPU

- GeForce cards share NVIDIA's CUDA architecture with the data-centre line but are manufactured for desktop environments rather than 24/7 data-centre operation.

- The RTX 4090 launched in 2022 at $1,599 MSRP and in 2026 remains a relevant option: 24 GB GDDR6X at approximately 1 TB/s bandwidth, 16,384 CUDA cores, and 82.6 TFLOPS FP32. The RTX 5090, launched in January 2025 at $1,999 MSRP on NVIDIA's Blackwell consumer architecture, upgrades to 32 GB GDDR7 at 1.79 TB/s bandwidth and 21,760 CUDA cores, delivering roughly 30 to 40% better AI throughput than the 4090. Cloud rental of the RTX 5090 has fallen sharply: from $0.89/hr at launch to approximately $0.49/hr on-demand as of April 2026, a 45% decline.

- The gap between GeForce and data-centre GPUs comes down to memory capacity, reliability design, and compliance. The H100 offers 80 GB versus the RTX 5090's 32 GB. Data-centre GPUs use higher-bandwidth HBM with ECC (error-correcting) memory. NVIDIA's GeForce EULA technically prohibits consumer cards in data-centre production environments, though this is unevenly enforced in smaller deployments.

- One market signal worth noting: NVIDIA made a deliberate decision not to release an RTX 60-series consumer GPU generation. The economics of shipping Blackwell racks to hyperscalers at data-centre margins are so favourable compared to consumer GPU revenue that the consumer GPU line has become a secondary priority. The RTX 5090 may be the last consumer GPU that represents a meaningful performance inflection for several years.

Part 2 — Performance Numbers That Actually Matter

Training: NVIDIA Data-Centre GPUs Still Dominate

- For large-model training (pretraining foundation models and fine-tuning LLMs with billions of parameters across multi-GPU clusters), NVIDIA data-centre GPUs hold a structural advantage. NVLink enables high-bandwidth multi-GPU communication within a server. InfiniBand and Spectrum-X Ethernet connect nodes at scale. The tooling for distributed training across hundreds of H100s or B200s is battle-tested.

- Ironwood has a credible counter-argument at sufficient scale. A full Ironwood pod at 42.5 exaFLOPS exceeds any GPU-based system available commercially. But the prerequisite is framework compatibility: training pipelines must be written in JAX or TensorFlow to access that performance. The cost of migrating from PyTorch to JAX is a significant engineering investment. For most teams below frontier-model scale, the training ecosystem is CUDA, and rewriting to access TPU economics is hard to justify.

Inference: Where the 2026 Economics Are Shifting Fastest

- Inference, serving a trained model to answer real user requests, is where the competitive picture has moved most dramatically in the past twelve months. By 2030, inference is projected to consume 75% of all AI compute.

- Ironwood has demonstrated 4x better performance per chip for inference than Trillium (v6e), which itself had already shown 4x better price-performance than H100 for large-batch LLM inference. The evidence from the Trillium generation is not hypothetical: Midjourney migrated from NVIDIA clusters to TPU v6e and reduced monthly inference spending from $2.1 million to $700,000, a 67% reduction. Stability AI moved 40% of its image generation inference to TPU v6 in 2025. A computer vision startup that sold 128 H100 GPUs and redeployed on TPU v6e saw monthly bills fall from $340,000 to $89,000.

- With Ironwood now generally available and offering another 4x improvement over Trillium, the economics for large-batch TensorFlow and JAX inference workloads have moved further in Google's direction. The caveat remains specificity: TPU advantages materialise on TensorFlow and JAX models, large-batch workloads, and production inference at scale. For PyTorch inference, smaller batches, or mixed-workload environments, the picture is less definitive.

- For smaller models, GeForce cards remain highly competitive. The RTX 5090 at 32 GB handles most 7B to 13B models comfortably. The RTX 4090 at 24 GB handles the same workloads with quantisation. For teams serving small models or running local inference for development, these options deliver adequate performance at a fraction of the enterprise cost.
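The migration figures quoted earlier in this section reduce to simple percentage arithmetic. The sketch below recomputes them from the monthly bills given in the text; the helper names are illustrative, and the annualised figure assumes the monthly bill stays flat for twelve months.

```python
def percent_reduction(before: float, after: float) -> float:
    """Percentage drop from `before` to `after`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100


def annual_savings(before_monthly: float, after_monthly: float) -> float:
    """Yearly savings, assuming the monthly bill stays constant."""
    return (before_monthly - after_monthly) * 12


# Monthly inference bills (USD) as quoted in this article: (before, after).
migrations = {
    "Midjourney": (2_100_000, 700_000),
    "Computer vision startup": (340_000, 89_000),
}

for name, (before, after) in migrations.items():
    print(
        f"{name}: {percent_reduction(before, after):.1f}% lower, "
        f"${annual_savings(before, after):,.0f} saved per year"
    )
```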

Memory: The Actual Constraint

- The most decisive performance variable for AI inference is not TFLOPS but memory capacity and bandwidth. A model's weights, along with its KV cache and activations, must fit in accelerator memory to run efficiently. Per device, the H100 offers 80 GB, the H200 141 GB, the B200 192 GB, and the B300 288 GB. Ironwood offers 192 GB per chip. On the consumer side, the RTX 5090 offers 32 GB and the RTX 4090 24 GB.

- For models above 32 GB, a category that includes 70B-parameter LLMs in FP16 (about 140 GB of weights), frontier multimodal models, and large-batch inference, there is no GeForce alternative without multi-GPU sharding, which adds system complexity and cost. For models that fit within 32 GB, the RTX 5090 handles them directly and is the most cost-effective single GPU for that workload profile.
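A rough way to apply the memory constraint is to estimate the weight footprint from parameter count and precision, then compare it with the capacities listed above. The sketch below does that. The 20% headroom for KV cache and activations is an assumption chosen for illustration, not a figure from this article, and real overhead varies widely with batch size and context length.

```python
# Bytes per parameter at common precisions.
BYTES_PER_PARAM = {"fp16": 2.0, "fp8": 1.0, "int4": 0.5}

# Per-device memory capacities (GB) as quoted in this article.
DEVICE_MEMORY_GB = {
    "RTX 4090": 24,
    "RTX 5090": 32,
    "H100": 80,
    "H200": 141,
    "B200": 192,
    "Ironwood": 192,
    "B300": 288,
}


def weight_footprint_gb(params_billions: float, precision: str) -> float:
    """Weights only: 1e9 params x bytes per param / 1e9 bytes per GB."""
    return params_billions * BYTES_PER_PARAM[precision]


def devices_that_fit(
    params_billions: float,
    precision: str,
    overhead_fraction: float = 0.2,  # ASSUMPTION: headroom for KV cache etc.
) -> list[str]:
    """Single devices whose memory covers weights plus assumed headroom."""
    needed = weight_footprint_gb(params_billions, precision) * (1 + overhead_fraction)
    return [name for name, cap in DEVICE_MEMORY_GB.items() if cap >= needed]


for size, precision in [(7, "fp16"), (13, "fp16"), (13, "int4"), (70, "fp16")]:
    gb = weight_footprint_gb(size, precision)
    print(f"{size}B @ {precision}: {gb:.1f} GB weights -> {devices_that_fit(size, precision)}")
```

Under these assumptions a 13B model in FP16 fits on an RTX 5090 but not an RTX 4090, while a 70B model in FP16 needs a 192 GB-class device or sharding across several smaller ones.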

Part 3 — Pricing: What Things Actually Cost in Mid-2026

NVIDIA Data-Centre GPUs

- Hardware prices remain elevated relative to manufacturing cost. The B200 lists at $35,000 to $40,000 per unit, with Epoch AI estimating a manufacturing cost of approximately $6,400. The B300 Blackwell Ultra carries a list price in the same $35,000 to $40,000 range per unit, with DGX B300 system pricing (8 GPUs) at approximately $300,000 to $350,000. The H100 hardware remains at $25,000 to $40,000 depending on configuration.

- Cloud pricing has compressed significantly as supply has expanded. H100 cloud rental is now $1.25 to $3.36/hr across 42 providers, with the average sitting around $3.12/hr on-demand. H200 instances run $2.37 to $4.54/hr. B200 on-demand and reserved pricing ranges from $2.25 to $16/hr depending on provider and billing model, with reserved instances as low as $2.25/hr on 36-month terms; spot pricing goes lower still, to $2.12/hr on platforms like Spheron. B300 spot pricing has appeared as low as $2.45/hr on Spheron, undercutting most H200 rates despite being the newest generation. A 3.6 million unit B200 backlog persists through mid-2026, making cloud rental faster to access than hardware purchase for most teams.

Google TPUs (Cloud Rental Only)

- TPU v5e costs approximately $1.20 per chip-hour at on-demand rates. TPU v4 runs around $3.22/hr and v5p approximately $4.20/hr. Reserved pricing drops these significantly: v6e reserved plans can reach $0.39 per chip-hour. Ironwood (v7) pricing has not been published at on-demand rates for general customers as of this writing; access is currently through reservation and committed use agreements with Google Cloud. Given Ironwood's 44% lower TCO estimate versus GB200 at equivalent scale, the economics are likely to be compelling once broadly available.

NVIDIA GeForce

- The RTX 4090 retails at approximately $1,500 to $1,800 for new units in 2026. The RTX 5090 carries an MSRP of $1,999 with street prices above that in constrained markets. Cloud rental has fallen: the RTX 5090 is now available at approximately $0.49/hr on-demand and $0.13/hr on spot instances. The RTX 4090 rents for $0.39 to $0.69/hr. For prototyping, fine-tuning a 7B model with LoRA, or evaluating approaches, spending $10 to $25 on cloud GPU time per experiment versus $200 or more on an H100 for the same task is a meaningful difference for early-stage teams.
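For teams weighing a purchase against rental, a break-even estimate makes the trade-off concrete. The sketch below uses the RTX 5090 MSRP and on-demand rate quoted above. It deliberately ignores electricity, the host machine, resale value, and street-price premiums, all of which are assumptions that shift the result, and the utilisation values are hypothetical.

```python
HOURS_PER_MONTH = 730  # average hours in a calendar month


def break_even_hours(purchase_price_usd: float, rental_rate_per_hour: float) -> float:
    """GPU-hours of rental that cost the same as buying the card outright."""
    if rental_rate_per_hour <= 0:
        raise ValueError("rental rate must be positive")
    return purchase_price_usd / rental_rate_per_hour


def break_even_months(
    purchase_price_usd: float,
    rental_rate_per_hour: float,
    utilisation: float,  # fraction of each month the GPU is actually busy (0-1]
) -> float:
    """Calendar months until buying matches renting at a given utilisation."""
    if not 0 < utilisation <= 1:
        raise ValueError("utilisation must be in (0, 1]")
    hours = break_even_hours(purchase_price_usd, rental_rate_per_hour)
    return hours / (HOURS_PER_MONTH * utilisation)


RTX_5090_MSRP = 1_999      # USD, from this article
RTX_5090_ON_DEMAND = 0.49  # USD/hr, from this article

print(f"Break-even: {break_even_hours(RTX_5090_MSRP, RTX_5090_ON_DEMAND):,.0f} GPU-hours")
for utilisation in (1.0, 0.5, 0.25):  # hypothetical utilisation levels
    months = break_even_months(RTX_5090_MSRP, RTX_5090_ON_DEMAND, utilisation)
    print(f"  at {utilisation:.0%} utilisation: {months:.1f} months")
```

The lower the utilisation, the longer rental stays cheaper, which is why intermittent prototyping workloads tend to favour renting.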

Each category serves a different workload profile.

Part 4 — Market Share in 2026

- NVIDIA commands approximately 80% of the AI accelerator market by revenue. Data-centre GPU revenue has become the company's primary business by a wide margin, with the consumer gaming segment now representing roughly 8% of revenue compared to 35% four years ago. NVIDIA has estimated it will sell a total of $1 trillion worth of chips based on Blackwell and the upcoming Vera Rubin architectures across 2026 and 2027.

- AMD is the largest merchant GPU competitor with 5 to 8% of the AI accelerator market. The MI350 series has been AMD's fastest-ramping product in company history. The MI455X, the flagship of the MI400 series, features 432 GB of HBM4 memory and 19.6 TB/s bandwidth and is targeting shipping in Q3 2026 in rack-scale Helios configurations. AMD has secured major partnerships: a custom MI450 GPU co-engineered with Meta and an OpenAI partnership, with combined committed deployments of 12 gigawatts. AMD's data-centre revenue for full-year 2025 was $16.64 billion, up 32%, and the company is targeting double-digit market share gains by 2027.

- TPUs hold approximately 13% of the AI chip market by revenue, led by Google's internal deployments and growing external adoption. Google's projected 4.3 million TPU shipments in 2026 and the Ironwood GA announcement signal that TPU is no longer a purely internal Google infrastructure play. Broadcom, which designs Ironwood under an agreement running through 2031, is anticipating $100 billion in AI chip revenue from ASICs next year.

- Hyperscaler custom ASICs, including Amazon Trainium, Microsoft silicon, and Google TPUs, are collectively targeting 10 to 15% of the total AI chip market by 2026.

- The forward trajectory that matters most: NVIDIA's share at the inference layer is expected to decline as custom ASICs and TPUs capture production inference workloads. NVIDIA is likely to maintain near-total dominance in training, where CUDA's ecosystem advantage and multi-GPU tooling remain structural. The strategic question for AI companies is which layer of the stack they are primarily operating in.

Part 5 — The Decision Framework

- For AI startups building and shipping products, the practical answer is GeForce cards for development and H100s or TPUs for production, rented as needed rather than purchased. An RTX 5090 at $1,999 or rented at under $0.50/hr is the right environment for prototyping, fine-tuning smaller models, and evaluating approaches. When the workload moves to production at scale, the right choice between H100 or B200 cloud rental and Ironwood depends almost entirely on the framework (PyTorch favours GPU; TensorFlow and JAX favour TPU) and the inference batch profile (large, consistent batches favour TPU economics).

- For data platforms building on open-source models, those running continuous inference on large models at significant scale are the clearest candidates for TPU economics. Ironwood's 4x performance-per-chip improvement over Trillium, combined with the results already seen on Trillium (4x better price-performance than the H100 for large-batch LLM inference, and the 67% spending reduction Midjourney reported), translates directly to margin at production scale. The prerequisites are framework compatibility and a commitment to Google Cloud. For platforms built on PyTorch without a migration budget, H100 or B200 remain the default, with B300 as the upgrade path for memory-bound workloads.

- For AI agencies serving diverse client workloads, flexibility matters more than optimisation for a single workload. An H100 or B200 cloud rental handles any PyTorch or TensorFlow model without framework constraints. A pool of RTX 5090s handles most fine-tuning and small-batch inference at under $0.50/hr. Ironwood access is valuable for specific high-volume inference clients who have locked into Google Cloud. The right architecture for an agency is usually a mix: consumer GPUs for development work, H100 or B200 rentals for training jobs, and Ironwood or TPU access for production inference at clients who have crossed the scale threshold where it matters.
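The heuristics in this part can be condensed into a small function. The sketch below is a deliberate simplification of the guidance above, not a complete decision procedure: the `Workload` type, its field names, and the returned labels are all illustrative, and real choices also depend on budget, supply, and existing cloud commitments.

```python
from dataclasses import dataclass

GEFORCE_MAX_GB = 32  # RTX 5090 memory, per this article
TPU_FRAMEWORKS = {"jax", "tensorflow"}
KNOWN_FRAMEWORKS = TPU_FRAMEWORKS | {"pytorch"}


@dataclass(frozen=True)
class Workload:
    framework: str           # "pytorch", "jax", or "tensorflow"
    model_memory_gb: float   # weights plus runtime headroom
    production: bool         # False for prototyping and evaluation
    large_batch: bool = False


def recommend(w: Workload) -> str:
    """Map a workload to a hardware category using the article's heuristics."""
    framework = w.framework.lower()
    if framework not in KNOWN_FRAMEWORKS:
        raise ValueError(f"unknown framework: {w.framework}")

    if not w.production:
        # Development work: consumer cards if the model fits in 32 GB.
        if w.model_memory_gb <= GEFORCE_MAX_GB:
            return "GeForce (RTX 5090 class)"
        return "data-centre GPU rental (H100/B200)"

    # Production: TPU economics apply to JAX/TensorFlow with large batches.
    if framework in TPU_FRAMEWORKS and w.large_batch:
        return "TPU (Ironwood)"
    return "data-centre GPU rental (H100/B200)"


print(recommend(Workload("pytorch", 16, production=False)))
print(recommend(Workload("jax", 150, production=True, large_batch=True)))
print(recommend(Workload("pytorch", 150, production=True, large_batch=True)))
```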

What to Watch in the Next 12 Months

- Several things are moving simultaneously. NVIDIA's Vera Rubin architecture is targeting the second half of 2026, with the Rubin NVL144 platform promising 8 exaflops in a single rack. AMD's MI455X Helios rack-scale system ships in Q3 2026, giving GPU users a credible third option at competitive pricing without NVIDIA's supply constraints. Google's Ironwood availability is expanding through 2026 as the 4.3 million unit production ramp proceeds. Google's eighth-generation TPU, split into dedicated training (TPU 8t) and inference (TPU 8i) chips on TSMC 2nm, is targeting late 2027 and signals the longest TPU roadmap Google has made public.

- The macro trend is clear: inference economics are driving hardware choice at production scale, and custom silicon is winning that cost argument on qualifying workloads. NVIDIA's training dominance is not under threat. NVIDIA's inference dominance is, and the pace of that erosion accelerated in 2026 with Ironwood going to general availability and AMD's MI400 series entering the market.

- The teams that build framework-agnostic inference pipelines today, using abstraction layers that allow workloads to move between CUDA and non-CUDA hardware, will have the most flexibility to capture those economics as they continue to shift.
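One way to picture such an abstraction layer is a thin interface that serving code depends on, with hardware-specific backends registered behind it. The sketch below is a minimal illustration of that pattern only. Every name in it (`InferenceBackend`, `EchoBackend`, `register`, `serve`) is hypothetical, and the stand-in backend simply echoes its input where a real one would wrap a CUDA or XLA runtime.

```python
from typing import Callable, Dict, List, Protocol


class InferenceBackend(Protocol):
    """Interface the serving code depends on; hardware details stay behind it."""

    name: str

    def generate(self, prompts: List[str]) -> List[str]: ...


class EchoBackend:
    """Stand-in backend for illustration; performs no real inference."""

    def __init__(self, name: str) -> None:
        self.name = name

    def generate(self, prompts: List[str]) -> List[str]:
        return [f"[{self.name}] {p}" for p in prompts]


# Registry mapping a backend name to a factory that builds it on demand.
_REGISTRY: Dict[str, Callable[[], InferenceBackend]] = {}


def register(name: str, factory: Callable[[], InferenceBackend]) -> None:
    _REGISTRY[name] = factory


def get_backend(name: str) -> InferenceBackend:
    try:
        return _REGISTRY[name]()
    except KeyError:
        raise ValueError(f"no backend registered as {name!r}") from None


def serve(prompts: List[str], backend_name: str) -> List[str]:
    """Serving code picks hardware by configuration, not by code changes."""
    return get_backend(backend_name).generate(prompts)


# Hypothetical registrations; real factories would load the model per target.
register("cuda", lambda: EchoBackend("cuda"))
register("tpu", lambda: EchoBackend("tpu"))

print(serve(["hello"], "cuda"))
print(serve(["hello"], "tpu"))
```

With this shape, moving a workload between hardware targets becomes a configuration change in the caller, although the model code inside each backend still has to be ported and validated.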

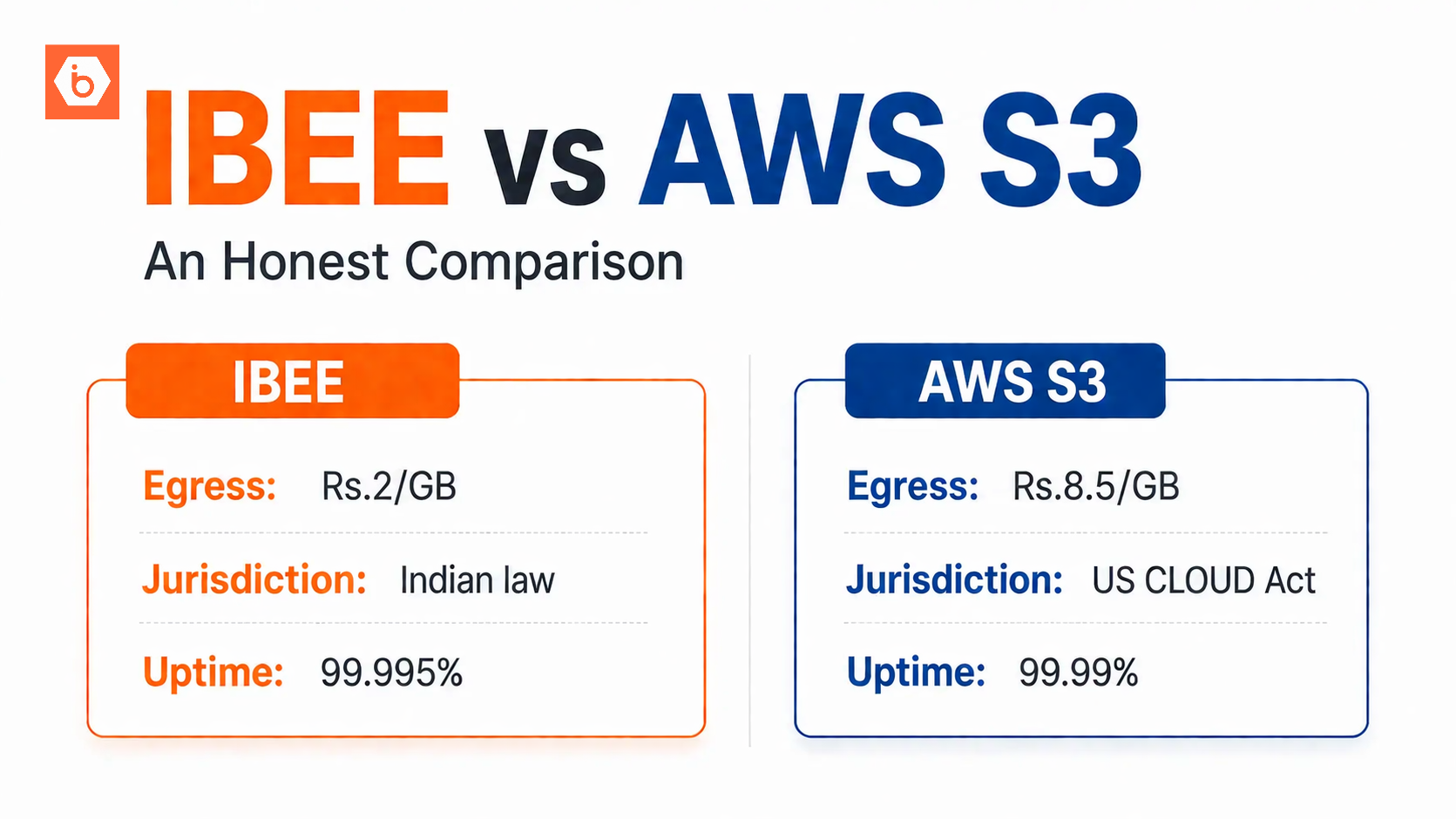

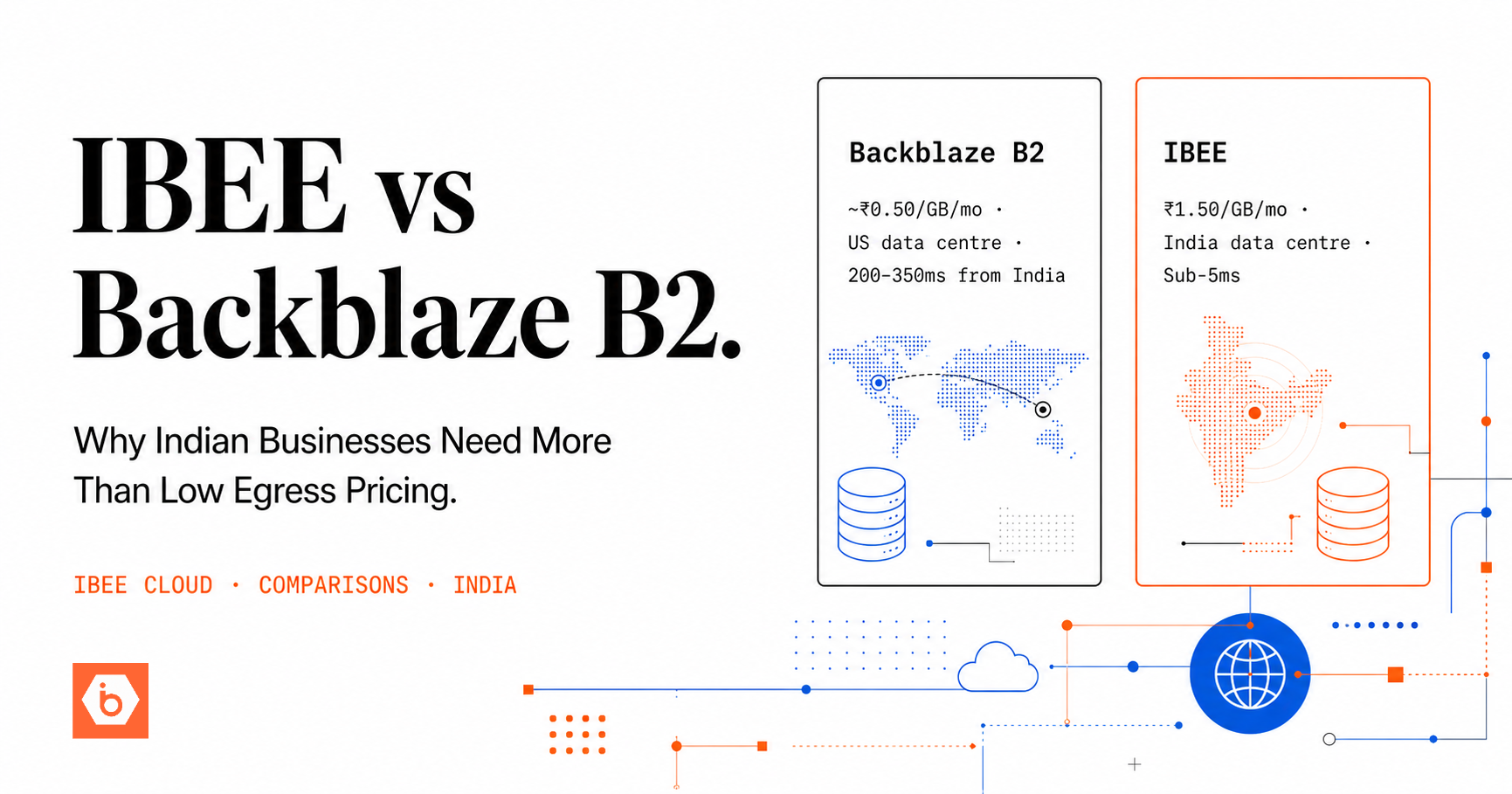

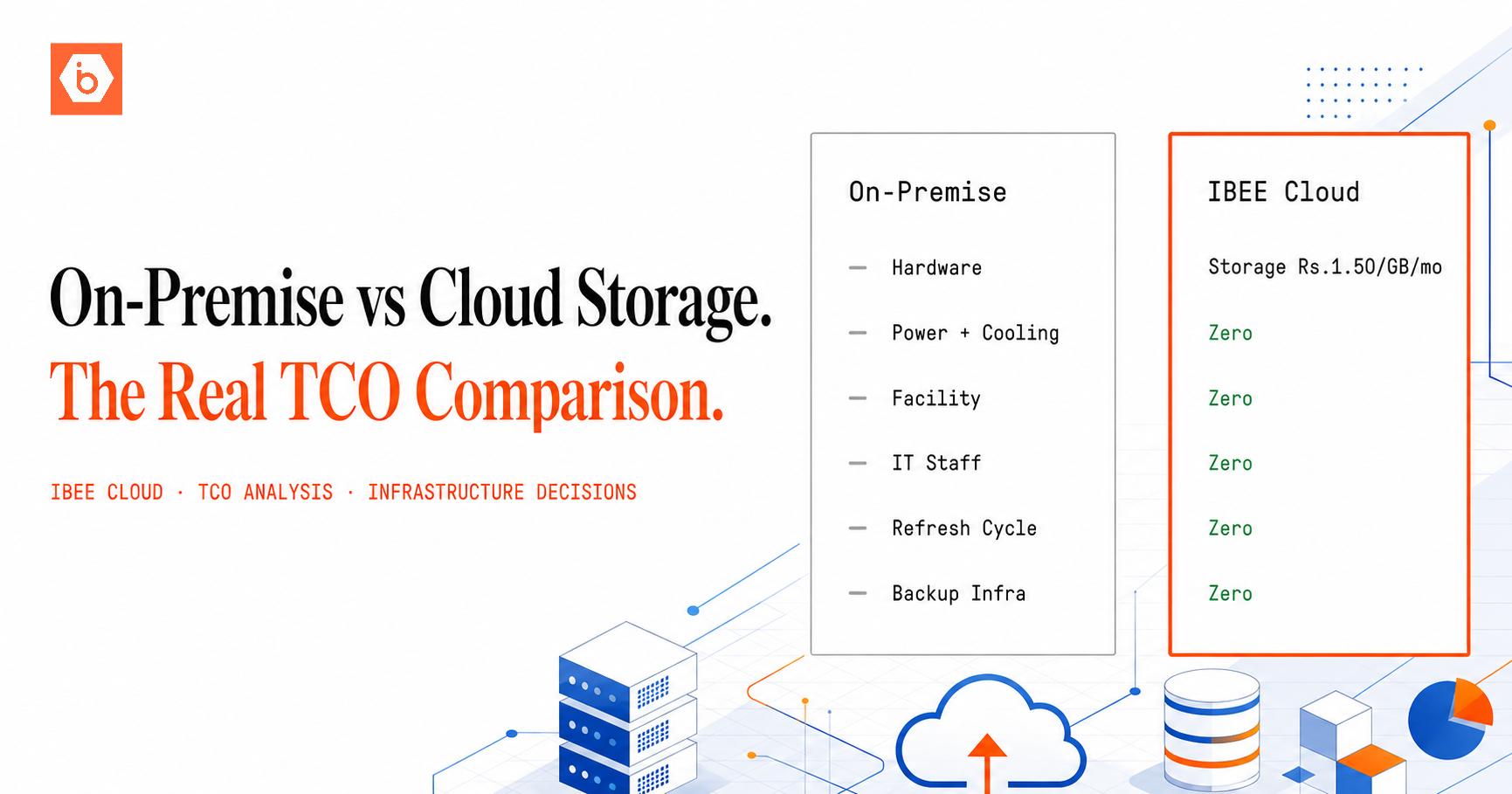

- IBEE provides India-sovereign GPU compute and object storage for AI infrastructure. For AI startups and data platforms evaluating their storage and compute stack, our team can help assess the right architecture for your workload and scale.